Azure Files is a very convenient storage option when you need persistent state in Azure Kubernetes Service or Azure Red Hat OpenShift. It is cheap, it doesn’t count against the maximum number of disks that can be attached to each worker node, and it supports many pods mounting the same share at the same time. The one downside is its reduced performance when compared to other options (see my previous post on storage options for Azure Red Hat OpenShift).

The Microsoft docs on Azure Red Hat OpenShift do not contain a lot of detail on storage options, and the Red Hat documentation on Azure Files only describes using statically created shares, not how to make OpenShift create new ones. An alternative is looking at the AKS documentation on dynamic volumes with Azure Files, but the question often comes up whether this works for OpenShift too. Well, here we go!

The first step is creating a storage class that tells OpenShift how to create volumes on Azure Files. Note that you don’t specify a storage account name, only its SKU (Standard_LRS in this example; whether you pick Standard or Premium has important performance implications) and its location (taken from a variable in this example):

    # Variables
    sc_name=myazfiles
    pvc_name=myshare
    app_name=azfilespod
    location=northeurope   # assumption: set this to the region of your cluster
    # Create SC
    cat <<EOF | kubectl apply -f -
    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: $sc_name
    provisioner: kubernetes.io/azure-file
    mountOptions:
      - dir_mode=0777
      - file_mode=0777
      - uid=0
      - gid=0
      - mfsymlinks
      - cache=strict
      - actimeo=30
      - noperm
    parameters:
      skuName: Standard_LRS
      location: $location
    EOF
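
Since the SKU is the main performance knob, it can be handy to render the storage class from a small function, so that a Standard and a Premium class can exist side by side. The following is only a sketch: `render_azfiles_sc` is a hypothetical helper name, it reuses the mount options shown above, and it assumes that the SKU you pass (for example Premium_LRS) is available in your region.

```shell
# render_azfiles_sc NAME SKU LOCATION
# Prints a StorageClass manifest for the azure-file provisioner to stdout.
# Hypothetical helper for illustration; it does not talk to the cluster.
render_azfiles_sc() {
  cat <<EOF
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: $1
provisioner: kubernetes.io/azure-file
mountOptions:
  - dir_mode=0777
  - file_mode=0777
  - uid=0
  - gid=0
  - mfsymlinks
  - cache=strict
  - actimeo=30
  - noperm
parameters:
  skuName: $2
  location: $3
EOF
}
```

For example, `render_azfiles_sc myazfiles-premium Premium_LRS "$location" | kubectl apply -f -` would create a second class (the name `myazfiles-premium` is made up) that a PVC can reference in its `storageClassName`.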

The mount options of the storage class are, as the name implies, optional (duh!). You can find more information about what each of the options actually does in the man page for mount.cifs. Especially the last option noperm is interesting: Azure Files can be a good candidate for the persistent storage required by Tekton-based OpenShift Pipelines, but without the noperm mount option the base repos cannot be cloned from the pipeline containers successfully, due to file permission errors.
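
To check which options actually reached the CIFS mount, one approach is to look at the mount's line in `/proc/mounts` from inside a pod that uses the share. This is a minimal sketch: `has_mount_option` is a hypothetical helper, and it assumes the kernel reports each option with the same spelling used in the storage class, which may not hold for every option.

```shell
# has_mount_option LINE OPTION
# Succeeds if OPTION appears in the options field (4th column) of a
# /proc/mounts-style LINE. Hypothetical helper for illustration.
has_mount_option() {
  # Extract the comma-separated options field
  opts=$(printf '%s\n' "$1" | awk '{print $4}')
  # Surround with commas so that only whole options match
  case ",$opts," in
    *",$2,"*) return 0 ;;
    *) return 1 ;;
  esac
}
```

Once a pod mounts the share under `/mnt/azure` (as done later in this post), something like `has_mount_option "$(kubectl exec $app_name -- grep ' /mnt/azure ' /proc/mounts)" noperm && echo "noperm is active"` would confirm the option.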

The new storage class will be visible in the OpenShift console:

The azure-file provisioner will do certain things when a new share is required: it will create a new storage account, it will get its key (required for management of the storage account), and it will store both in a Kubernetes secret. All that using the service account persistent-volume-binder. One problem here is that this specific service account does not have enough privilege to create secrets in OpenShift, so you need to grant it enough privilege:

    # To prevent error: User "system:serviceaccount:kube-system:persistent-volume-binder"
    # cannot create resource "secrets" in API group "" in the namespace "default"
    # See https://bugzilla.redhat.com/show_bug.cgi?id=1575933
    cat <<EOF | kubectl apply -f -
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: system:controller:persistent-volume-binder
      namespace: default
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: system:controller:persistent-volume-binder
    subjects:
    - kind: ServiceAccount
      name: persistent-volume-binder
      namespace: kube-system   # ServiceAccount subjects need a namespace
    EOF
    oc policy add-role-to-user admin system:serviceaccount:kube-system:persistent-volume-binder -n default
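
Before creating any claims, it may be worth checking that the grant took effect. A possible sketch uses `kubectl auth can-i` with impersonation; `binder_can_create_secrets` is a hypothetical wrapper name, and the check assumes your own user is allowed to impersonate service accounts (typically true for a cluster admin).

```shell
# binder_can_create_secrets NAMESPACE
# Asks the API server whether the persistent-volume-binder service account
# may create secrets in NAMESPACE. kubectl prints "yes" or "no".
binder_can_create_secrets() {
  kubectl auth can-i create secrets \
    --as=system:serviceaccount:kube-system:persistent-volume-binder \
    -n "$1"
}
```

Running `binder_can_create_secrets default` should answer `yes` once the role has been granted in the `default` namespace.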

The next step is creating a Persistent Volume Claim (PVC), that will contain the information required to create a share using the storage class we created earlier:

    # Create PVC
    cat <<EOF | kubectl apply -f -
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: $pvc_name
    spec:
      accessModes:
      - ReadWriteMany
      storageClassName: $sc_name
      resources:
        requests:
          storage: 1Gi
    EOF

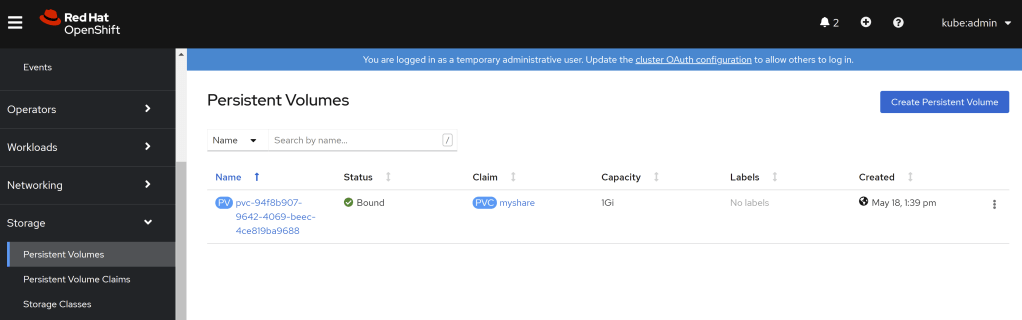

    $ kubectl get pvc
    NAME      STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    myshare   Bound    pvc-94f8b907-9642-4069-beec-4ce819ba9688   1Gi        RWX            myazfiles      149m

Now we have all we need, and we can create a pod that mounts a volume that refers to the PVC we just created:

    # Create pod
    cat <<EOF | kubectl apply -f -
    kind: Pod
    apiVersion: v1
    metadata:
      name: $app_name
      labels:
        app: $app_name
    spec:
      containers:
      - name: $app_name
        image: erjosito/sqlapi:1.0
        resources:
          requests:
            cpu: 100m
            memory: 128Mi
          limits:
            cpu: 1000m
            memory: 1024Mi
        ports:
        - containerPort: 8080
        volumeMounts:
        - mountPath: "/mnt/azure"
          name: volume
      volumes:
      - name: volume
        persistentVolumeClaim:
          claimName: $pvc_name
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: $app_name
      name: $app_name
    spec:
      ports:
      - port: 8080
        protocol: TCP
        targetPort: 8080
      selector:
        app: $app_name
      type: LoadBalancer
    EOF

The pod will trigger the creation of a persistent volume (PV):

    $ kubectl get pv
    NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM             STORAGECLASS   REASON   AGE
    pvc-94f8b907-9642-4069-beec-4ce819ba9688   1Gi        RWX            Delete           Bound    default/myshare   myazfiles               149m

And this volume will be backed by an Azure Files share. You can see in the following code how you can get the storage account name and its key to see the new share:

    $ secret_name=$(kubectl get pv -o json | jq -r '.items[0].spec.azureFile.secretName')
    $ storage_account_name=$(kubectl get secret $secret_name -o json | jq -r '.data.azurestorageaccountname' | base64 -d)
    $ storage_account_key=$(kubectl get secret $secret_name -o json | jq -r '.data.azurestorageaccountkey' | base64 -d)
    $ az storage account show -n $storage_account_name -o table
    AccessTier    CreationTime                      EnableHttpsTrafficOnly    Kind       Location     Name                     PrimaryLocation    ProvisioningState    ResourceGroup    StatusOfPrimary
    ------------  --------------------------------  ------------------------  ---------  -----------  -----------------------  -----------------  -------------------  ---------------  -----------------
    Hot           2021-05-18T10:56:40.973673+00:00  True                      StorageV2  northeurope  fce5e070a7f4d48c7b7e659  northeurope        Succeeded            aro-17037        available
    $ az storage share list --account-name $storage_account_name --account-key $storage_account_key -o table
    Name                                                        Quota    Last Modified
    ----------------------------------------------------------  -------  -------------------------
    aro-mxmn9-dynamic-pvc-94f8b907-9642-4069-beec-4ce819ba9688  1        2021-05-18T11:39:50+00:00
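
Picking `.items[0]` works when the cluster has a single PV. With several volumes, a more targeted lookup is to start from the claim and follow its `spec.volumeName`. The helpers below are a sketch with hypothetical names; they rely on the same `azureFile.secretName` field and secret keys used above, and assume the secret lives in the current namespace.

```shell
# pv_for_pvc PVC_NAME: prints the name of the PV bound to the claim.
pv_for_pvc() {
  kubectl get pvc "$1" -o jsonpath='{.spec.volumeName}'
}

# pv_secret_name PV_NAME: prints the secret holding the storage account credentials.
pv_secret_name() {
  kubectl get pv "$1" -o jsonpath='{.spec.azureFile.secretName}'
}

# secret_field SECRET KEY: prints the base64-decoded value of one key of a secret.
secret_field() {
  kubectl get secret "$1" -o jsonpath="{.data.$2}" | base64 -d
}

# Example usage (commented out so that sourcing this file has no side effects):
# pv_name=$(pv_for_pvc "$pvc_name")
# secret_name=$(pv_secret_name "$pv_name")
# storage_account_name=$(secret_field "$secret_name" azurestorageaccountname)
# storage_account_key=$(secret_field "$secret_name" azurestorageaccountkey)
```

This avoids the dependency on `jq` and keeps working when other persistent volumes exist in the cluster.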

We can verify that the share has been mounted with 777 permissions (read/write for everybody), as defined in the storage class:

    $ kubectl exec $app_name -- bash -c 'ls -ald /mnt/*'
    drwxrwxrwx. 2 root root 0 May 18 11:39 /mnt/azure

You can easily create a new file in the share:

    $ kubectl exec $app_name -- bash -c 'touch /mnt/azure/helloword.txt'

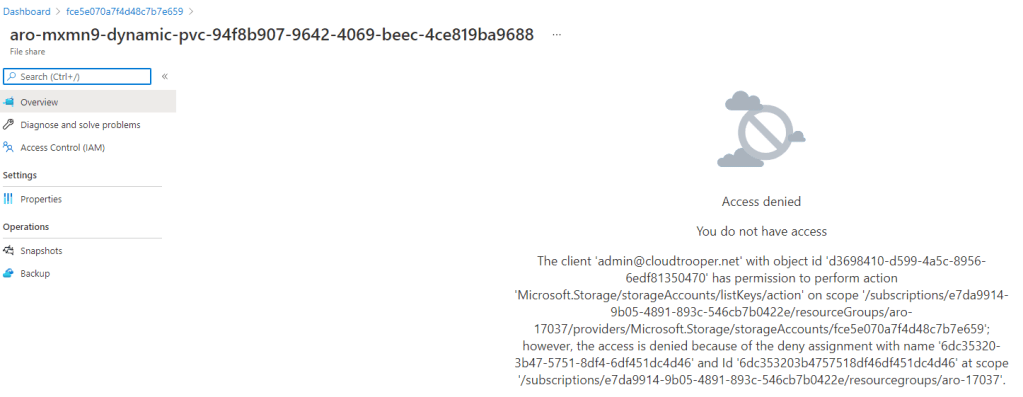

If you try to verify the existence of the new file in the Azure portal, you will be disappointed. The reason is that the portal uses AAD authentication to access the share, and since the share is in the node resource group of the cluster, you don’t have enough permissions, even if you are the owner of the subscription:

However, you can get file information with the storage account key, which we have from the Kubernetes secret. And while we are at it, you can get the share name from the PV too. As you can see, our helloword.txt file is right there:

    $ share_name=$(kubectl get pv -o json | jq -r '.items[0].spec.azureFile.shareName')
    $ az storage file list --share-name $share_name --account-name $storage_account_name --account-key $storage_account_key -o table
    Name           Content Length    Type    Last Modified
    -------------  ----------------  ------  ---------------
    helloword.txt  0                 file
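
Because the claim uses `ReadWriteMany`, a second pod should be able to mount the same share and see the file as well. The following sketch renders such a pod; `render_share_reader` and the pod name in the usage note are hypothetical, and the image is simply reused from the pod above.

```shell
# render_share_reader POD_NAME PVC_NAME
# Prints a minimal Pod manifest that mounts the claim under /mnt/azure.
# Hypothetical helper for illustration; it does not talk to the cluster.
render_share_reader() {
  cat <<EOF
kind: Pod
apiVersion: v1
metadata:
  name: $1
spec:
  containers:
  - name: reader
    image: erjosito/sqlapi:1.0
    volumeMounts:
    - mountPath: "/mnt/azure"
      name: volume
  volumes:
  - name: volume
    persistentVolumeClaim:
      claimName: $2
EOF
}
```

After `render_share_reader azfilesreader "$pvc_name" | kubectl apply -f -`, running `kubectl exec azfilesreader -- ls /mnt/azure` should list the file created from the first pod.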

We configured a service for our pod, because the deployed image offers some interesting API endpoints that can be useful for testing:

    $ kubectl get svc
    NAME         TYPE           CLUSTER-IP     EXTERNAL-IP                            PORT(S)          AGE
    azfilespod   LoadBalancer   172.30.76.15   20.82.201.44                           8080:31155/TCP   3h35m
    kubernetes   ClusterIP      172.30.0.1     <none>                                 443/TCP          4h54m
    openshift    ExternalName   <none>         kubernetes.default.svc.cluster.local   <none>           4h49m

One of those endpoints allows for some coarse I/O benchmarking based on a single file (which, as you can see, is located below the mount path of the share, /mnt/azure/):

    ❯ curl 'http://20.82.201.44:8080/api/ioperf?size=512&file=%2Fmnt%2Fazure%2Fiotest&writeblocksize=16384&readblocksize=16384'
    {
      "Filepath": "/mnt/azure/iotest",
      "Read IOPS": 2.0,
      "Read bandwidth in MB/s": 35.42,
      "Read block size (KB)": 16384,
      "Read blocks": 32,
      "Read time (sec)": 14.45,
      "Write IOPS": 6.0,
      "Write bandwidth in MB/s": 103.18,
      "Write block size (KB)": 16384,
      "Write time (sec)": 4.96,
      "Written MB": 512,
      "Written blocks": 32
    }
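
The bandwidth figures follow directly from the file size and the elapsed times in the response. As a quick cross-check, here is a small sketch (`throughput_mb_s` is a hypothetical helper name) that divides megabytes by seconds. It works from the rounded times shown above, so small deviations from the reported values are expected.

```shell
# throughput_mb_s MEGABYTES SECONDS
# Prints the resulting throughput in MB/s with two decimals.
throughput_mb_s() {
  awk -v mb="$1" -v s="$2" 'BEGIN { printf "%.2f\n", mb / s }'
}
```

`throughput_mb_s 512 4.96` prints 103.23 and `throughput_mb_s 512 14.45` prints 35.43, close to the 103.18 and 35.42 MB/s in the response; the remaining difference is presumably due to the endpoint using unrounded times.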

For more realistic benchmarks you can exec into the container, install fio (apt install -y fio) and run some tests with multiple threads:

    root@azfilespod:/mnt/azure# fio --name=8krandomreads --rw=randread --direct=1 --ioengine=libaio --bs=256k --numjobs=4 --iodepth=128 --size=128M --runtime=600 --group_reporting
    8krandomreads: (g=0): rw=randread, bs=(R) 256KiB-256KiB, (W) 256KiB-256KiB, (T) 256KiB-256KiB, ioengine=libaio, iodepth=128
    ...
    fio-3.1
    Starting 4 processes
    Jobs: 4 (f=4): [r(4)][57.1%][r=131MiB/s,w=0KiB/s][r=524,w=0 IOPS][eta 00m:03s]
    8krandomreads: (groupid=0, jobs=4): err= 0: pid=1682: Wed May 19 07:52:32 2021
       read: IOPS=426, BW=107MiB/s (112MB/s)(512MiB/4806msec)
        slat (usec): min=6, max=228188, avg=8012.38, stdev=21899.05
        clat (msec): min=260, max=1879, avg=1065.97, stdev=333.83
         lat (msec): min=260, max=1882, avg=1073.98, stdev=335.66
        clat percentiles (msec):
         |  1.00th=[  284],  5.00th=[  477], 10.00th=[  617], 20.00th=[  776],
         | 30.00th=[  894], 40.00th=[  986], 50.00th=[ 1099], 60.00th=[ 1167],
         | 70.00th=[ 1284], 80.00th=[ 1368], 90.00th=[ 1485], 95.00th=[ 1552],
         | 99.00th=[ 1687], 99.50th=[ 1737], 99.90th=[ 1804], 99.95th=[ 1821],
         | 99.99th=[ 1888]
       bw (  KiB/s): min= 3072, max=56320, per=23.55%, avg=25689.43, stdev=13526.45, samples=30
       iops        : min=   12, max=  220, avg=100.30, stdev=52.82, samples=30
      lat (msec)   : 500=7.81%, 750=10.16%, 1000=23.39%, 2000=58.64%
      cpu          : usr=0.10%, sys=2.40%, ctx=27190, majf=0, minf=2140
      IO depths    : 1=0.2%, 2=0.4%, 4=0.8%, 8=1.6%, 16=3.1%, 32=6.2%, >=64=87.7%
         submit    : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
         complete  : 0=0.0%, 4=99.7%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.3%
         issued rwt: total=2048,0,0, short=0,0,0, dropped=0,0,0
         latency   : target=0, window=0, percentile=100.00%, depth=128

    Run status group 0 (all jobs):
       READ: bw=107MiB/s (112MB/s), 107MiB/s-107MiB/s (112MB/s-112MB/s), io=512MiB (537MB), run=4806-4806msec
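
The headline numbers in the fio summary are tied together by the block size: bandwidth is roughly IOPS times block size. A small sketch of that relation (`bw_mib_s` is a hypothetical helper name):

```shell
# bw_mib_s IOPS BLOCK_KIB
# Prints the bandwidth in MiB/s implied by an IOPS figure and a block size in KiB.
bw_mib_s() {
  awk -v iops="$1" -v kib="$2" 'BEGIN { printf "%.1f\n", iops * kib / 1024 }'
}
```

`bw_mib_s 426 256` prints 106.5, which matches the 107MiB/s that fio reports for 426 IOPS at 256KiB blocks, allowing for rounding in the displayed IOPS.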

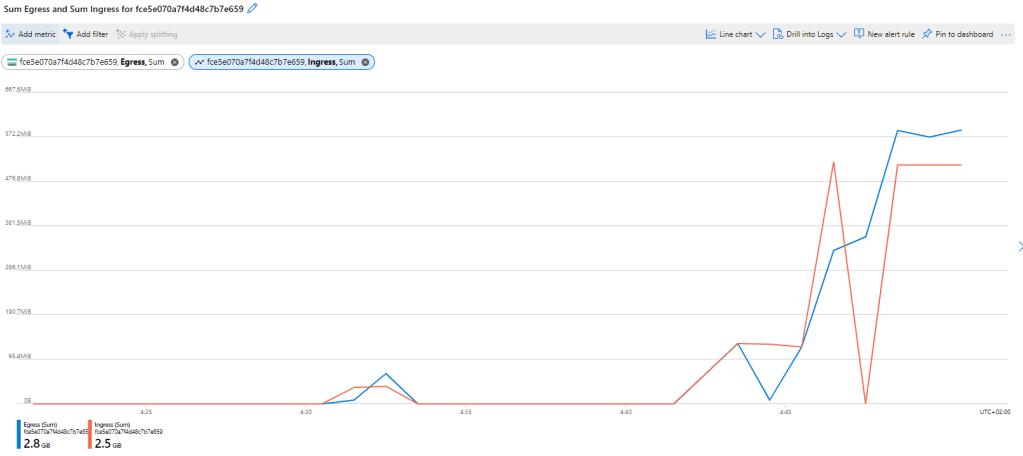

You can see the increased activity in the share in the Azure Portal, to verify that we are indeed testing against Azure Files:

And that concludes this post. I hope you got an idea of how to get uncomplicated persistent storage in Azure Red Hat OpenShift, provided your performance requirements can be satisfied by Azure Files.