There are several ways in which you can deploy NVAs in Azure, from a redundancy perspective:

- 1+1 (active/passive): the least scalable solution. Your maximum throughput is that of the single active NVA, yet you normally have to pay for 2 VMs and 2 NVA licenses

- 1+1 (active/active): 2 NVAs forward traffic at all times, hence fully leveraging the cost of the NVAs. If one of them fails, the system will continue to run at 50% performance. If the performance of the 2 combined NVAs is not enough, scaling up is again the only option. This often leads to overprovisioning and unnecessary costs

- N (active/active): this is the most recommended pattern in the cloud, since it allows you to scale in or out depending on whether more or less performance is required, offering more granular scalability and cost control. Azure has a native construct for this type of system, called Virtual Machine Scale Set
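
To put numbers on the failure behaviour of the active/active patterns, here is a minimal sketch. It assumes that all NVA instances are identical and that traffic is spread evenly across them; the function name is hypothetical and only used for illustration.

```python
def capacity_after_one_failure(active_instances: int) -> float:
    """Fraction of the aggregate throughput that is left when one active NVA fails."""
    if active_instances < 1:
        raise ValueError("at least one active instance is required")
    return (active_instances - 1) / active_instances


# Compare a 2-node active/active pair with larger scale sets.
for n in (2, 4, 8):
    print(f"{n} active NVAs: {capacity_after_one_failure(n):.0%} of capacity left after one failure")
```

With 2 active NVAs a single failure halves the available throughput, while larger scale sets lose a proportionally smaller share.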

On the other hand, you have probably heard of Azure Route Server by now: it allows Network Virtual Appliances (NVAs) to advertise routes to and learn routes from Azure networking, so that they can insert themselves automatically in the data path.

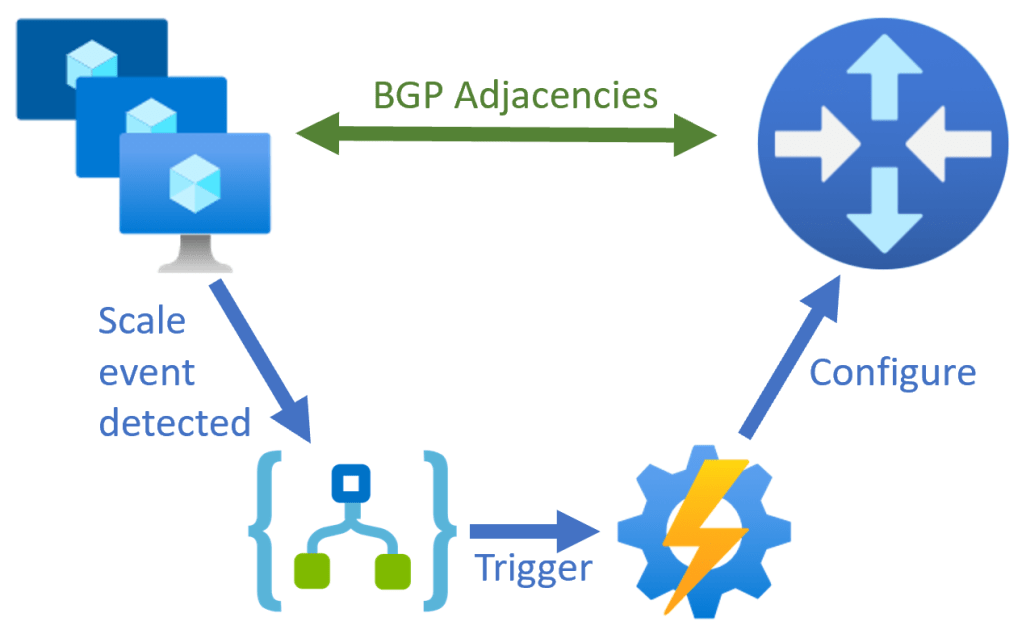

In this post we are going to focus on one particular challenge of deploying multiple NVAs: the Azure Route Server needs to know whether there are 1, 2 or N NVA instances, since it has to establish BGP adjacencies to each and every one of them. You don’t want to rely on an administrator manually configuring those adjacencies, so is there a way of automating this? You bet there is!

The next diagram describes the overall architecture that I have tested. I have put most of the code in my GitHub repository here, but some items are not automated in that script yet (like the creation of the Logic App and the Automation Account). The NVAs are Ubuntu VMs running the BIRD BGP software, deployed as a Virtual Machine Scale Set:

The easy part is creating the NVAs so that they try to connect to the Azure Route Server, since the Route Server peering IP addresses should stay constant. In my case, I will be using a Virtual Machine Scale Set based on the Ubuntu image, where a cloud init file will install and configure the BIRD software package with BGP functionality. After deploying the Route Server and finding out its two peering IP addresses, this is what the VMSS cloud init file will look like:

    #cloud-config
    packages:
      - bird
    runcmd:
      - sysctl -w net.ipv4.ip_forward=1
      - sysctl -w net.ipv4.conf.all.accept_redirects=0
      - sysctl -w net.ipv4.conf.all.send_redirects=0
      - iptables -t nat -A POSTROUTING ! -d '10.0.0.0/8' -o eth0 -j MASQUERADE
      - systemctl restart bird
    write_files:
      - content: |
          log syslog all;
          protocol device {
            scan time 10;
          }
          protocol direct {
            disabled;
          }
          protocol kernel {
            preference 254;
            learn;
            merge paths on;
            import filter {
              reject;
            };
            export filter {
              reject;
            };
          }
          protocol static {
            import all;
            # Default route
            route 0.0.0.0/0 via 10.1.2.1;
            # Vnet prefix to cover the RS' IPs
            route 10.1.0.0/16 via 10.1.2.1;
          }
          protocol bgp rs0 {
            description "Route Server instance 0";
            multihop;
            local as 65001;
            neighbor 10.1.1.4 as 65515;
            import filter {accept;};
            export filter {accept;};
          }
          protocol bgp rs1 {
            description "Route Server instance 1";
            multihop;
            local as 65001;
            neighbor 10.1.1.5 as 65515;
            import filter {accept;};
            export filter {accept;};
          }
        path: /etc/bird/bird.conf

This approach wouldn’t be valid in a production environment, of course: there you would want a way of configuring the NVAs other than the cloud init file. But it suits me for this test.

Check the reference code for details on how to automatically generate this cloud init file. You will also find there the full JSON definition of the Logic App, as well as the PowerShell script for reconfiguring the Route Server.
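
As an illustration of what generating the file automatically can involve, the following sketch renders one BIRD `protocol bgp` stanza per Route Server peering IP. It is not the method used in the reference code: the template, the function name `render_bgp_stanzas` and its defaults are assumptions made for this example, and the IPs and ASNs are simply the ones from the file above.

```python
# Template for a single BIRD BGP stanza; doubled braces are literal braces for str.format().
BGP_TEMPLATE = """protocol bgp rs{index} {{
  description "Route Server instance {index}";
  multihop;
  local as {local_asn};
  neighbor {peer_ip} as {peer_asn};
  import filter {{accept;}};
  export filter {{accept;}};
}}
"""


def render_bgp_stanzas(route_server_ips, local_asn=65001, peer_asn=65515):
    """Return one 'protocol bgp' stanza per Route Server peering IP."""
    return "\n".join(
        BGP_TEMPLATE.format(index=i, local_asn=local_asn, peer_ip=ip, peer_asn=peer_asn)
        for i, ip in enumerate(route_server_ips)
    )


# The two peering IPs reported by the Route Server in this test.
print(render_bgp_stanzas(["10.1.1.4", "10.1.1.5"]))
```

The rendered text can then be placed under the `content` key of the `write_files` entry, indented to match the YAML block.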

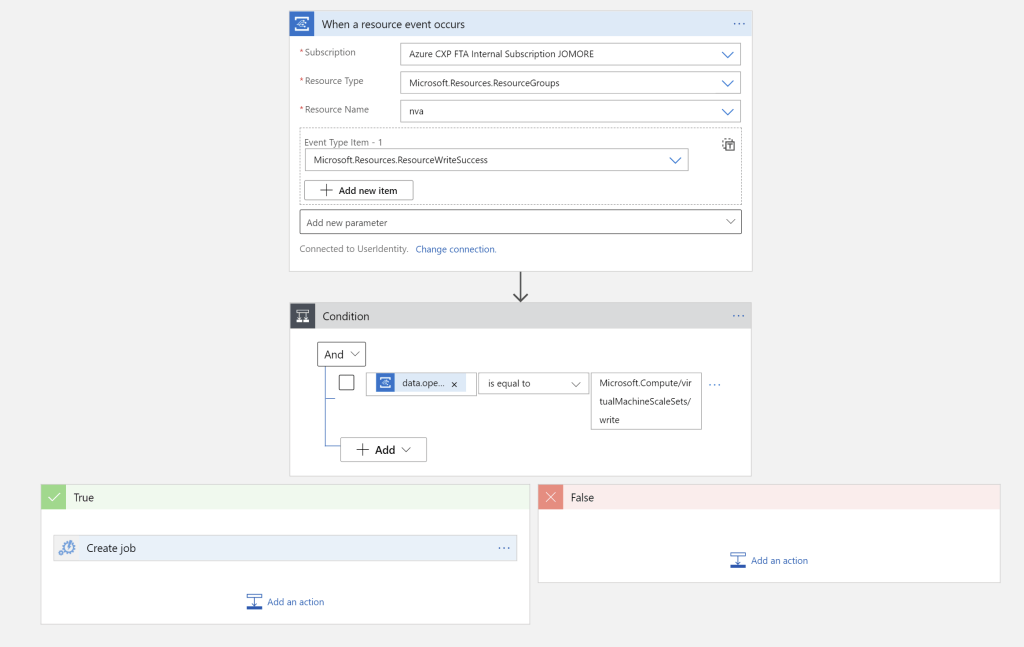

After booting and running the cloud-init script, the NVA VMSS instances will try to reach out to the Route Server, but the Route Server will not know them unless a peering has been defined for each of them. Logic Apps can be triggered upon ARM events, whenever something happens in our resource group, and an additional condition check will verify that that “something” is a modification to the VMSS (the Microsoft.Compute/virtualMachineScaleSets/write operation).
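
The condition check can be pictured with a short sketch. It assumes that the event delivered to the Logic App carries the operation name under `data.operationName`, as resource events from Event Grid usually do; the sample event below is hypothetical and trimmed to that single field.

```python
VMSS_WRITE = "Microsoft.Compute/virtualMachineScaleSets/write"


def is_vmss_write(event: dict) -> bool:
    """True when a resource event reports a write operation on a VM scale set."""
    operation = event.get("data", {}).get("operationName", "")
    # Case-insensitive comparison, as a defensive choice.
    return operation.lower() == VMSS_WRITE.lower()


# Hypothetical event, reduced to the field this check needs.
sample_event = {"data": {"operationName": "Microsoft.Compute/virtualMachineScaleSets/write"}}
print(is_vmss_write(sample_event))
```

Events for other resource types in the same resource group fail this check, so no Automation job is created for them.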

This trigger will fire not only on scale in/out events, but on any modification to any VMSS in the resource group, so the triggered script needs to support calls that do not require any new configuration (it should be idempotent). This is what the Logic App looks like in the graphical editor:

The Logic App can use different authentication types; in my case I have used user-assigned managed identities (in preview at the time of this writing). As per my testing, these are the two role assignments that would be required:

- Microsoft.EventGrid/eventSubscriptions/write (at the resource group level): it will automatically create and subscribe to an EventGrid topic for changes in resources of that group

- Microsoft.Automation/automationAccounts/jobs/write (at the automation account level): it will execute Azure Automation Runbooks

After the condition is verified, a job is created in Azure Automation, which will execute this script:

# Parameters

param(

[Parameter(mandatory = $false)]

[string]$ConnectionAssetName = "AzureRunAsConnection",

[Parameter(mandatory = $false)]

[string]$RouteServerName = "rs",

[Parameter(mandatory = $false)]

[string]$RouteServerRG = "nva",

[Parameter(mandatory = $false)]

[string]$VmssName = "nva",

[Parameter(mandatory = $false)]

[string]$VmssRG = "nva",

[Parameter(mandatory = $false)]

[string]$BgpAsn = "65001",

[Parameter(mandatory = $false)]

[string]$TenantId = "ecd38d6d-544b-494c-9b29-ff3d6a31c040"

)

# Debug info

Write-Output "Running with parameters: ConnectionAssetName = $ConnectionAssetName, RouteServerName = $RouteServerName, VmssName = $VmssName, VmssRG = $VmssRG, TenantId = $TenantId"

# Authentication using Azure Automation connections

$Connection = Get-AutomationConnection -Name $ConnectionAssetName

if ($Connection) {

Write-Output "Connection $ConnectionAssetName found"

} else {

Write-Output "Connection $ConnectionAssetName not found, exiting"

exit

}

# The TenantID can be supplied over a parameter

$AzAuthentication = Connect-AzAccount -ServicePrincipal `

-TenantId $TenantId `

-ApplicationId $Connection.ApplicationId `

-CertificateThumbprint $Connection.CertificateThumbprint

# Verify authentication

if (!$AzAuthentication) {

Write-Output "Failed to authenticate to Azure: $($Error[0].Exception.Message)"

exit

} else {

$SubscriptionId = $(Get-AzContext).Subscription.Id

Write-Output "Authentication as service principal for Azure successful on subscription $SubscriptionId."

}

# Get VMSS in Resource Group

$VMSS = Get-AzVmss -Name $VmssName -ResourceGroupName $VmssRG

Write-Output "VMSS found with ID $($VMSS.Id)"

# Get Instance IPs

Write-Output "Getting VMSS instances..."

$VMs = Get-AzVmssVM -VMScaleSetName $VmssName -ResourceGroupName $VmssRG

$VmssIPs = @()

$VmssNames = @{}

foreach ($VM in $VMs)

{

Write-Output "Processing instance $($VM.Name)..."

$NicId = $VM.NetworkProfile.NetworkInterfaces[0].Id

Write-Output "Instance has NIC ID $NicId"

$NIC = Get-AzResource -Id $NicId -ExpandProperties

$IP = $NIC.Properties.ipConfigurations[0].properties.privateIPAddress

Write-Output "VMSS instance has IP address $IP"

$VmssIPs += $IP

$VmssNames[$IP] = $VM.Name

}

# Get Route Server adjacencies

$PeeringIPs = @()

$PeeringNames = @{}

Write-Output "Looking for Route Server $RouteServerName..."

$RSId = $(Get-AzResource -Name $RouteServerName -ResourceType Microsoft.Network/virtualHubs -ResourceGroupName $RouteServerRG).Id

Write-Output "Found RS with ID $RSId"

$RSuri = "${RSId}/bgpConnections?api-version=2021-02-01"

$Peerings = $(Invoke-AzRest -Method GET -Path $RSuri).content | ConvertFrom-Json

foreach ($Peering in $Peerings.value)

{

$PeerIP = $Peering.properties.peerIp

Write-Output "Found Route Server peering to $PeerIP"

$PeeringIPs += $PeerIP

$PeeringNames[$PeerIP] = $Peering.name

$PeerProvisioningState = $Peering.properties.provisioningState

# Exit if there was a peering in Updating state

if ($PeerProvisioningState -eq "Updating") {

Write-Output "Peering to $PeerIP is in Updating state, exiting to avoid uncontrolled concurrent operations"

exit

}

}

# See whether any peering is missing

foreach ($VmssIP in $VmssIPs) {

if ($VmssIP -in $PeeringIPs) {

Write-Output "Peering to $VmssIP already exists"

} else {

Write-Output "Peering to $VmssIP needs to be created"

$PeerName = $VmssNames[$VmssIP]

$PeerJson = '{"name": "' + $PeerName + '", "properties": {"peerIp": "' + $VmssIP + '", "peerAsn": "' + $BgpAsn + '"}}'

$PeerUri = "${RSId}/bgpConnections/${PeerName}?api-version=2021-02-01"

Write-Output "Creating Route Server peering $PeerName for IP $VmssIP and ASN $BgpAsn..."

Invoke-AzRest -Method PUT -Path $PeerUri -Payload $PeerJson

# Wait until the provisioning state of the new peering is Succeeded/Failed

Write-Output "Waiting for peering $PeerName to finish creation..."

$i = 0

Do {

$PeeringState = $($(Invoke-AzRest -Method GET -Path $PeerUri).content | ConvertFrom-Json).properties.provisioningState

$i += 1

Start-Sleep -s 15 # Wait 15 seconds between each check

} While ($PeeringState -eq "Updating")

$i = $i * 15

Write-Output "Peering $PeerName provisioning state is $PeeringState, wait time $i seconds"

}

}

# See whether any peering should be deleted

foreach ($PeeringIP in $PeeringIPs) {

if ($PeeringIP -in $VmssIPs) {

Write-Output "Instance $PeeringIP still exists"

} else {

Write-Output "Instance $PeeringIP does not exist any more, peering needs to be deleted"

$PeerName = $PeeringNames[$PeeringIP]

$PeerUri = "${RSId}/bgpConnections/${PeerName}?api-version=2021-02-01"

Write-Output "Deleting Route Server peering $PeerName..."

Invoke-AzRest -Method DELETE -Path $PeerUri

# Wait until the deleting of the peering is finished

Write-Output "Waiting for peering $PeerName to finish deletion..."

$i = 0

Do {

Try {

$PeeringState = $($(Invoke-AzRest -Method GET -Path $PeerUri).content | ConvertFrom-Json).properties.provisioningState

} Catch {

$PeeringState = ""

}

$i += 1

Start-Sleep -s 15 # Wait 15 seconds between each check

} While ($PeeringState -eq "Deleting")

$i = $i * 15

Write-Output "Peering $PeerName is deleted (state is $PeeringState), wait time $i seconds"

}

}

I had some errors with the PowerShell commands for the Azure Route Server (they will probably get fixed by the time it hits General Availability), so I decided to use the REST API to interact with it.
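
For readers who want to see the shape of those REST calls in isolation, here is a minimal sketch of how the path and body for a peering creation can be assembled. It mirrors the fields used by the script above (`peerIp`, `peerAsn`, API version 2021-02-01), but builds the body with a JSON serializer instead of string concatenation. The function name and the resource ID in the example are hypothetical.

```python
import json

API_VERSION = "2021-02-01"  # same API version the runbook uses


def build_peering_request(route_server_id: str, peer_name: str, peer_ip: str, peer_asn: str):
    """Return the (path, JSON body) pair for a PUT that creates a BGP peering."""
    path = f"{route_server_id}/bgpConnections/{peer_name}?api-version={API_VERSION}"
    body = json.dumps(
        {"name": peer_name, "properties": {"peerIp": peer_ip, "peerAsn": peer_asn}}
    )
    return path, body


# Hypothetical Route Server resource ID, for illustration only.
rs_id = (
    "/subscriptions/00000000-0000-0000-0000-000000000000"
    "/resourceGroups/nva/providers/Microsoft.Network/virtualHubs/rs"
)
path, body = build_peering_request(rs_id, "nva_1", "10.1.2.5", "65001")
print(path)
print(body)
```

Letting a serializer produce the body avoids quoting mistakes if a peering name ever contains characters that need escaping.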

Something else worth noting is that peering creation and deletion operations cannot run concurrently, so you need to wait for one operation to finish before starting the next. As a consequence, the script can take a while to run if it needs to create or delete a number of peerings, so you want to set the “Wait for Job” setting in the Logic App “Create job” step to False.
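
The wait-between-operations pattern can be sketched generically as follows. Unlike the loops in the script, this version adds an upper bound on the number of checks, which is my own addition for the example; the function name, its parameters and the simulated states are hypothetical.

```python
import time


def wait_while(get_state, transient_state, interval_s=15, max_checks=40, sleep=time.sleep):
    """Poll get_state() until it stops returning transient_state.

    Returns (final_state, seconds_waited); raises TimeoutError after max_checks polls.
    """
    for check in range(max_checks):
        state = get_state()
        if state != transient_state:
            return state, check * interval_s
        sleep(interval_s)
    raise TimeoutError(f"still {transient_state!r} after {max_checks} checks")


# Simulated provisioning states; a real caller would query the peering resource instead.
fake_states = iter(["Updating", "Updating", "Succeeded"])
state, waited = wait_while(lambda: next(fake_states), "Updating", sleep=lambda _s: None)
print(state, waited)
```

Injecting the `sleep` function keeps the example instantaneous, and the same trick makes the loop easy to test without waiting for real time to pass.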

Another point is authentication in the script: if you create the Azure Automation account with the CLI, there is no pre-built connection to interact with Azure. If you create it with the Azure portal, you have an option to create a default “AzureRunAsConnection” service principal. And since you need the portal anyway to install the required modules for this script (Az.Accounts, Az.Resources, Az.Network and Az.Compute), I went with the portal for this (heresy!).

As you can see, the script builds two lists, one with the IP addresses of the existing NVA instances and another with the IP addresses of the Route Server peerings, and then tries to reconcile them. Here is a sample output of a scale-in event where the NVA VMSS went down from 3 to 2 instances, so the script removed a peering from the Route Server:

Peering to 10.2.10.6 already exists

Peering to 10.2.10.5 already exists

Instance 10.2.10.6 still exists

Instance 10.2.10.5 still exists

Instance 10.2.10.7 does not exist any more, peering needs to be deleted

Deleting Route Server peering nva_3...

Headers : {[Pragma, System.String[]], [Retry-After, System.String[]], [x-ms-request-id, System.String[]],

[Azure-AsyncOperation, System.String[]]...}

Version : 1.1

StatusCode : 202

Method : DELETE

Content :
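
The reconciliation behind that output boils down to two set differences. The sketch below is a simplified model of the logic, not a port of the runbook; the function name is hypothetical and the IP addresses are the ones from the sample output.

```python
def reconcile(instance_ips, peering_ips):
    """Return (to_create, to_delete) so that the peerings match the live instances."""
    instances, peerings = set(instance_ips), set(peering_ips)
    return sorted(instances - peerings), sorted(peerings - instances)


# Scale-in from 3 to 2 instances: the peering to 10.2.10.7 is left over.
to_create, to_delete = reconcile(
    instance_ips=["10.2.10.6", "10.2.10.5"],
    peering_ips=["10.2.10.5", "10.2.10.6", "10.2.10.7"],
)
print("create:", to_create)
print("delete:", to_delete)
```

Running the same function again once both lists match returns two empty lists, which is the idempotent behaviour the trigger requires.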

Let’s try this out by scaling our VMSS to 8 instances (the maximum number of BGP peerings supported by the Azure Route Server at the time of this writing):

az vmss scale -n $nva_name -g $rg --new-capacity 8

After some time, the Logic App will have scaled the Azure Route Server to 8 peerings:

    $ az network routeserver peering list --routeserver rs -g nva -o table
    Name    PeerAsn    PeerIp     ProvisioningState    ResourceGroup
    ------  ---------  ---------  -------------------  ---------------
    nva_1   65001      10.1.2.5   Succeeded            nva
    nva_3   65001      10.1.2.7   Succeeded            nva
    nva_4   65001      10.1.2.8   Succeeded            nva
    nva_5   65001      10.1.2.9   Succeeded            nva
    nva_6   65001      10.1.2.10  Succeeded            nva
    nva_7   65001      10.1.2.11  Succeeded            nva
    nva_9   65001      10.1.2.13  Succeeded            nva
    nva_10  65001      10.1.2.4   Succeeded            nva

The subnets in the Virtual Network (where I have a test VM deployed) will get 8 routes, across which traffic will be load-balanced:

    $ az network nic show-effective-route-table --ids $azurevm_nic_id -o table
    Source                 State    Address Prefix      Next Hop Type          Next Hop IP
    ---------------------  -------  ------------------  ---------------------  -------------
    Default                Active   10.1.0.0/16         VnetLocal
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.5
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.7
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.8
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.9
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.10
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.11
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.13
    VirtualNetworkGateway  Active   0.0.0.0/0           VirtualNetworkGateway  10.1.2.4
    User                   Active   109.125.124.132/32  Internet

That last User route to 109.125.124.132 sends traffic destined for my machine straight to the Internet, so that I can connect from my laptop to the test VM.

To perform a final check, we can verify that the traffic from the VM is going to the Internet through the NVAs:

    $ ssh $azurevm_pip_ip "curl -s4 ifconfig.co"
    104.46.55.153

And 104.46.55.153 is the public IP address assigned to the NVA VMSS, not the public IP of the VM:

    az network public-ip list -o table -g $rg
    Name           ResourceGroup    Location    Zones    Address        AddressVersion    AllocationMethod    IdleTimeoutInMinutes    ProvisioningState
    -------------  ---------------  ----------  -------  -------------  ----------------  ------------------  ----------------------  -------------------
    azurevm-pip    nva              westeurope           13.81.35.89    IPv4              Dynamic             4                       Succeeded
    nvaLBPublicIP  nva              westeurope           104.46.55.153  IPv4              Dynamic             4                       Succeeded

Scaling down the VMSS from 8 to 4 instances will trigger the script again, which will reconfigure the Azure Route Server by eliminating the unneeded BGP peerings:

    $ az network routeserver peering list --routeserver rs -g nva -o table
    Name    PeerAsn    PeerIp     ProvisioningState    ResourceGroup
    ------  ---------  ---------  -------------------  ---------------
    nva_1   65001      10.1.2.5   Succeeded            nva
    nva_3   65001      10.1.2.7   Succeeded            nva
    nva_4   65001      10.1.2.8   Succeeded            nva
    nva_6   65001      10.1.2.10  Succeeded            nva

And that concludes this post. We have seen how to use Logic Apps to react upon changes in a VMSS-based NVA and automatically adapt the configuration of the Route Server. This provides multiple NVAs for traffic load sharing in an Azure environment with the dynamic behavior of BGP. Thanks for reading!


Hi, I saw your post and have just one question:

Why would you create a script for the BGP peerings instead of just creating all 8 peers up front and waiting for them to connect? You can predict the IP addresses by limiting the subnet size!


Good point Gabriel. You would need a /28 subnet, which in Azure gives room for 11 IPs. You could somehow block 3, so that the remaining 8 are deterministic. One downside is that a fully-grown 8-node cluster couldn’t be updated by adding a 9th node, but instead you would have to go down first. However, the approach of preconfiguring the BGP peers saves so much complexity, that a couple of downsides could be accepted.
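
The subnet arithmetic can be checked with a few lines. Azure reserves the first four addresses and the last address of every subnet, which is where the figure of 11 comes from. The prefix `10.1.2.0/28` below is only an assumed example, and the function name is hypothetical.

```python
import ipaddress


def usable_ips(cidr: str):
    """List the addresses that Azure can assign to NICs in the given subnet."""
    addresses = list(ipaddress.ip_network(cidr))
    # Azure keeps the first four addresses and the last one for itself.
    return [str(ip) for ip in addresses[4:-1]]


ips = usable_ips("10.1.2.0/28")
print(len(ips), ips[0], ips[-1])
```

Of those 11 addresses, 3 would have to be blocked so that the remaining 8 match the preconfigured peerings.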
