Networking for Azure PaaS services is a complex landscape to navigate. Azure documentation on this topic is spread across different areas (under Networking and under each specific PaaS service), and sometimes uses inconsistent terminology. My goal in this blog post is to establish a classification of PaaS services that can help navigate this complexity.

By the way, I will try to keep this post a generic overview of the different options for networking Azure managed services, and include examples of a couple of multi-service architectures. If you want a deep dive on topics like VNet injection or a comparison between different access options, do not miss this post by the great Federico Guerrini.

Let’s start at the beginning. What is a PaaS service, in Azure parlance? It is a “managed” service, meaning something that Microsoft manages for you, as opposed to a Virtual Machine, where you deploy your own software and configure it yourself.

For connectivity purposes, these services can be classified as multitenant or single-tenant. Single-tenant services can in turn be deployed in customer-managed VNets or in Microsoft-managed VNets. The rest of the post describes all types using consistent language and diagram notation.
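As a minimal sketch, the three categories can be modeled as a small Python enum. The `Deployment` attributes and the `classify` helper are hypothetical names for illustration, not part of any Azure SDK. The rule encoded is the one this post uses: what decides the category is whether the service is deployed inside a VNet (yours or Microsoft’s), not whether it gets dedicated compute.

```python
from dataclasses import dataclass
from enum import Enum


class Tenancy(Enum):
    """Connectivity categories used throughout this post."""
    MULTITENANT = "multitenant"
    SINGLE_TENANT_CUSTOMER_VNET = "single-tenant, customer-managed VNet"
    SINGLE_TENANT_MANAGED_VNET = "single-tenant, Microsoft-managed VNet"


@dataclass(frozen=True)
class Deployment:
    # Hypothetical attributes describing where a service instance runs.
    in_customer_vnet: bool
    in_microsoft_managed_vnet: bool
    dedicated_compute: bool = False  # e.g. a "Dedicated" App Service plan SKU


def classify(d: Deployment) -> Tenancy:
    """Classify by where the service lives, not by whether compute is dedicated."""
    if d.in_customer_vnet:
        return Tenancy.SINGLE_TENANT_CUSTOMER_VNET
    if d.in_microsoft_managed_vnet:
        return Tenancy.SINGLE_TENANT_MANAGED_VNET
    # Dedicated compute that is not inside any VNet still counts as multitenant.
    return Tenancy.MULTITENANT
```

For instance, a Web App on a “Dedicated” plan without VNet integration would be `Deployment(False, False, dedicated_compute=True)`, which this sketch classifies as multitenant.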

Multitenant services

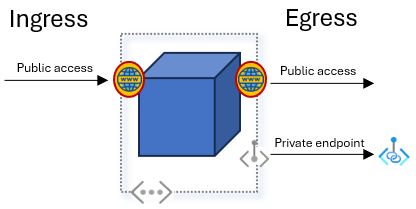

These are the classic services that come to mind when thinking about public cloud: Azure Storage, Azure SQL, Azure Key Vault, etc. The following diagram depicts the different possibilities for ingress and egress communication in multitenant services:

By the way, I will be using these icons throughout this post:

Let’s start with the ingress options:

- Public access: this is the easy one. Most PaaS services provide a URL that resolves to a public IP address, so that they can be accessed from anywhere with Internet connectivity.

- Service endpoints: as explained here, service endpoints are a way to restrict access to a multitenant service so that only certain Azure subnets can connect to it. Service endpoints have their pros and cons, but most customers have pivoted to the next option for private connectivity (if you want to read a discussion about Service Endpoints vs Private Endpoints, there are quite a lot of blogs out there, for example this one from Jeff Brown).

- Private Link: Private Link is the most common option used to connect to PaaS services privately. It is essentially a client/server technology, where the server part is called Private Link Service (and in the case of PaaS services is entirely managed by Microsoft), and the client part is called Private Endpoint and lives in an Azure Virtual Network.

And now to the egress options:

- Public access: if the PaaS service needs some backend connectivity, by default it will use public IP addresses to communicate over the Internet (or Microsoft’s backbone).

- VNet integration: some services (most notably Azure App Services) support sourcing egress traffic from an Azure VNet, so that the PaaS service will gain access to the private network of an organization.

- Private endpoint: if the destination is exposed through a Private Link service, some PaaS services support creating a managed private endpoint that connects to it.

What does “multitenant” actually mean here?

Good question, because this adjective doesn’t always apply 100% to the services involved. If you look at the canonical example of Azure Storage, it is deployed on Microsoft-managed hardware, and multiple storage accounts (from different customers) can share the same public IP address. That is multitenant alright!

But now let’s look at the basic implementation of a Web App (no private endpoint, no VNet integration) on an App Service plan with a “Dedicated” SKU. Is this multitenant? I would argue that it still is. Even if you get dedicated resources (the compute VMs), there are a lot of control plane components around the service that you don’t get to see (anybody who has deployed ASEv2 knows what I am talking about), which might or might not be shared. Hence, in this post I will still classify as multitenant those services that, even if they provide certain dedicated resources (App Services compute, Service Bus Premium, etc.), are not deployed inside your Virtual Network.

Security controls for multitenant services

Ingress

- When using public IP addresses:
  - Firewall rules
  - Trusted services

- When using Private Link:
  - Network Security Groups (NSGs) applied to the private endpoint subnet
  - Sending traffic to the private endpoint through a Network Virtual Appliance

- When using VNet service endpoints:
  - Specific Azure subnets

For example, looking at the configuration for an Azure Storage account, you can notice a couple of things:

- The traffic filter rules are only relevant for public network access, not for access over private link.

- There are two types of traffic filter rules: VNet/subnet-based (effective for access over service endpoints) and IP address-based.

- Even if you don’t allow any public IP address to access the Azure Storage account, certain services whose managed identity has a role on the storage account will still be able to connect to it if you enable the checkbox “Allow Azure services on the trusted service list to access this storage account”.
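The behavior described above can be sketched as a single decision function. This is a simplified model under stated assumptions: `StorageNetworkConfig`, `Request` and `is_allowed` are hypothetical names, the decision is network-level only (authentication and authorization still apply to every request), and settings not discussed in this post are ignored.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network


@dataclass
class StorageNetworkConfig:
    # Hypothetical model of a storage account's networking settings.
    allowed_subnets: set[str] = field(default_factory=set)      # VNet/subnet rules
    allowed_ip_ranges: list[str] = field(default_factory=list)  # single IPs or CIDR ranges
    allow_trusted_services: bool = False  # the "trusted service list" checkbox


@dataclass
class Request:
    # Hypothetical description of where an incoming request comes from.
    via_private_endpoint: bool = False
    source_subnet: str | None = None  # set when arriving over a service endpoint
    source_ip: str | None = None      # public source IP otherwise
    trusted_service_with_role: bool = False  # trusted service whose identity has a role here


def is_allowed(cfg: StorageNetworkConfig, req: Request) -> bool:
    """Network-level decision only; authentication still applies afterwards."""
    # The traffic filter rules only govern public network access, not Private Link.
    if req.via_private_endpoint:
        return True
    # VNet/subnet rules: effective for traffic arriving over service endpoints.
    if req.source_subnet is not None and req.source_subnet in cfg.allowed_subnets:
        return True
    # IP address rules: effective for traffic coming from public IP addresses.
    if req.source_ip is not None and any(
        ip_address(req.source_ip) in ip_network(r) for r in cfg.allowed_ip_ranges
    ):
        return True
    # Trusted services: need both the checkbox and a role for the service identity.
    return cfg.allow_trusted_services and req.trusted_service_with_role
```

Note how a trusted service with a role is still rejected until the checkbox is enabled, and how private endpoint traffic never reaches the rule checks.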

Egress

- When using VNet integration:
  - NSGs applied to the subnet used for VNet integration

- When using Private Link:
  - Since the private endpoint is managed by the service, you cannot configure NSGs on it.

- When using public IP addresses:
  - Some multitenant services support native egress filtering (see for example Outbound firewall rules for Azure SQL Database and Azure Synapse Analytics)

Multitenant service examples

Let’s try to make the previous theoretical discussion real. For example: what are the connectivity options that Azure Storage offers? Glad you asked:

Egress access from Azure Storage to other services is rather limited, but it does exist. One example is when it connects to Azure Key Vault to retrieve customer-managed encryption keys.

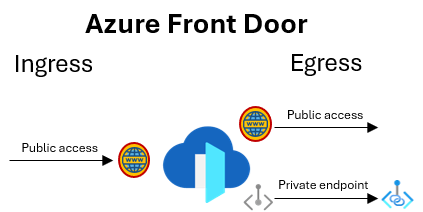

Let’s try another one, Azure Front Door:

Front Door is one of the services that offers managed private endpoints to privately connect to resources deployed in Azure VNets.

Chaining multitenant services with each other

What does a real chain of PaaS services look like? For example, we can take a classical architecture with Azure Front Door, a web application in App Services, and an Azure SQL backend:

As you can see, the communication between App Services and Azure SQL has been “stitched” using a Virtual Network. However, direct service-to-service communication can be secured too: even when public IP addresses are used, network access can be strongly restricted with the “trusted services” concept, best explained in the Azure Storage documentation. For example, you can read here the 2019 announcement of Data Factory starting to support this security mechanism for multitenant Integration Runtimes:

The “trusted services” mechanism uses authentication information to provide network access. Hence, until you authorize a service to access another one (using managed identities and RBAC role assignments), those two services will not have network connectivity between them. In later sections I will describe the available security controls for each topology in more detail.
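To make the idea concrete, here is a minimal sketch in which the network paths opened by the “trusted services” mechanism are derived purely from authorization data. `RoleAssignment` and `trusted_service_paths` are hypothetical names, and the model deliberately ignores role names and scopes below the resource level; the point it illustrates is that removing a role assignment removes the path.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RoleAssignment:
    # Simplified: "the managed identity of `principal` holds some role on `scope`".
    principal: str  # calling service, e.g. a Data Factory instance
    scope: str      # target resource, e.g. a storage account


def trusted_service_paths(
    assignments: list[RoleAssignment],
    trusted_sources: set[str],
    targets_allowing_trusted: set[str],
) -> set[tuple[str, str]]:
    """Return (source, target) pairs with network access via trusted services.

    A pair exists only if the source is on the trusted list, the target has
    trusted-service access enabled, and a role assignment links the two.
    """
    return {
        (a.principal, a.scope)
        for a in assignments
        if a.principal in trusted_sources and a.scope in targets_allowing_trusted
    }
```

With assignments from `adf-prod` to `stprod` and from `adf-dev` to `stdev`, the sketch yields exactly those two pairs: `adf-dev` gets no path to `stprod`, because nothing authorizes it there.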

Single-tenant services

Other Azure services are single-tenant, and can consequently be deployed in Virtual Networks with the pattern that Microsoft normally refers to as “VNet injection”. This diagram shows the possible connectivity options available for single-tenant services:

The ingress options are the following:

- Public access: most frequently, VNet-injected single-tenant services will require one or more public IP addresses to be allocated to them. These IP addresses will be used for the data plane and for the control plane. The fact that the service is “managed” means that Microsoft needs to somehow connect to it to perform configuration and monitoring tasks.

- Private access: since the service is in the Virtual Network, it will receive an IP address and be accessible over it.

- Private endpoints: some PaaS services that are deployed in Virtual Networks offer the possibility of connecting to them via Private Link. One example is Azure Application Gateway.

And for egress:

- Public access: for egress communication (data or control plane), the service will typically source these flows from its public IP address.

- Private access: of course, the fact that the service is deployed inside of a virtual network means that the service can access everything connected to that VNet, including on-premises networks.

- Private endpoint: if private endpoints exist in the network, the service will be able to communicate with those.

Managed Virtual Network

One consequence of deploying a PaaS service into a virtual network is that you need to administer the configuration of that virtual network, which can add some complexity. For example, you need to make sure that you don’t break the service’s control plane with your Network Security Groups or User-Defined Routes.

In order to alleviate this administrative overhead, some Azure services offer single-tenant deployments in a “managed” virtual network, that is, a virtual network owned by Microsoft. Microsoft creates a VNet somewhere, with some IP addresses, and you cannot peer your VNets to it. Consequently, you have no visibility whatsoever of the private IP addresses in there, and you can communicate with the service either through its public IP addresses or via Private Link:

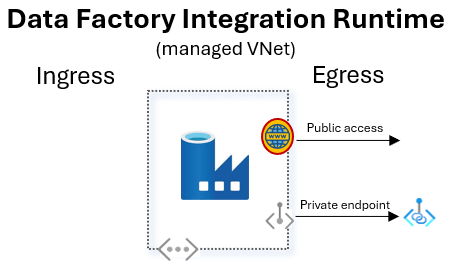

We can take the example of Azure Data Factory single-tenant Integration Runtimes, which only require egress access:

Since the virtual network is managed by Microsoft, you will not be able to peer with it, so the single-tenant Integration Runtime will not be able to connect to your data sources via their private IP addresses. However, most services deployed in this manner support “managed private endpoints” that can connect to Private Link services provided either by yourself (in front of one of your load balancers) or by another PaaS service.
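The resulting reachability rules can be sketched as follows. This is a simplified model with hypothetical names (`ManagedVNetRuntime`, `Target`, `reachable`); it assumes egress over public IP addresses is permitted and ignores features such as data exfiltration protection that may restrict egress further.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ManagedVNetRuntime:
    # Hypothetical model of a single-tenant runtime in a Microsoft-managed VNet.
    # Holds the Private Link services it has managed private endpoints to.
    managed_private_endpoints: set[str] = field(default_factory=set)


@dataclass(frozen=True)
class Target:
    name: str
    has_public_ip: bool = False
    private_link_service: str | None = None  # set if exposed through Private Link


def reachable(rt: ManagedVNetRuntime, t: Target) -> str | None:
    """Return how the runtime reaches the target, or None if it cannot.

    The managed VNet cannot be peered, so a target that only has a private
    IP in a customer VNet is unreachable unless it sits behind a Private Link
    service for which the runtime has a managed private endpoint.
    """
    if t.private_link_service is not None and t.private_link_service in rt.managed_private_endpoints:
        return "managed private endpoint"
    if t.has_public_ip:
        return "public IP"
    return None
```

A virtual machine that only has a private IP in your VNet is unreachable in this model; putting it behind a load balancer with a Private Link service, and creating the matching managed private endpoint, makes it reachable.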

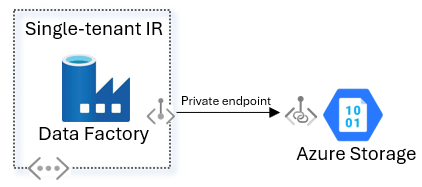

Looking at the previous communication example between integration runtimes and Azure Storage, this is what it would look like using single-tenant runtimes in a managed VNet:

Here both the private endpoint (inside of Data Factory’s managed virtual network) and the private link service (part of Azure Storage) are managed by Microsoft.

Security controls for single-tenant services (VNet-injected)

Ingress

- Customer-managed VNet:
  - When using public/private IP addresses:
    - Network Security Groups
  - When using Private Link:
    - Network Security Groups applied to the Private Endpoint subnet

- Microsoft-managed VNet:
  - NSGs will not work here, since you don’t have access to the Microsoft-managed VNet.

Egress

- Customer-managed VNet:
  - When using public/private IP addressing or Private Link:
    - Network Security Groups

- Microsoft-managed VNet:
  - Data Exfiltration Protection: here again, as a user you don’t have access to configure NSGs, but some services injected into managed VNets support a feature comparable to a “managed NSG”. For example, for Synapse you can read more about it in Data exfiltration protection for Azure Synapse Analytics workspaces.
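As a compact recap, the controls listed in this post for multitenant and single-tenant services can be kept in a lookup table. The keys and strings are informal labels paraphrasing the lists in this post, not Azure API values, and `controls_for` is a hypothetical helper; an empty list means there is no network control you can configure for that path.

```python
from __future__ import annotations

# Keys: (deployment model, direction, access path). "any" covers every path.
# Labels paraphrase this post's lists; they are not Azure API values.
CONTROLS: dict[tuple[str, str, str], list[str]] = {
    ("multitenant", "ingress", "public_ip"): ["firewall rules", "trusted services"],
    ("multitenant", "ingress", "service_endpoint"): ["allow specific Azure subnets"],
    ("multitenant", "ingress", "private_link"): [
        "NSGs on the private endpoint subnet",
        "NVA in front of the private endpoint",
    ],
    ("multitenant", "egress", "vnet_integration"): ["NSGs on the integration subnet"],
    ("multitenant", "egress", "private_link"): [],  # service-managed endpoint
    ("multitenant", "egress", "public_ip"): ["native egress filtering (some services)"],
    ("customer_vnet", "ingress", "ip"): ["NSGs"],  # public or private IP
    ("customer_vnet", "ingress", "private_link"): ["NSGs on the private endpoint subnet"],
    ("customer_vnet", "egress", "any"): ["NSGs"],
    ("managed_vnet", "ingress", "any"): [],  # no access to the Microsoft-managed VNet
    ("managed_vnet", "egress", "any"): ["data exfiltration protection (some services)"],
}


def controls_for(model: str, direction: str, path: str) -> list[str]:
    """Look up controls; fall back to the "any" entry for the same model and direction."""
    for key in ((model, direction, path), (model, direction, "any")):
        if key in CONTROLS:
            return CONTROLS[key]
    raise KeyError(f"no entry for {model}/{direction}/{path}")
```

Raising on unknown combinations, instead of returning an empty list, keeps “no control available” distinct from “combination not covered”.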

Single-tenant service examples

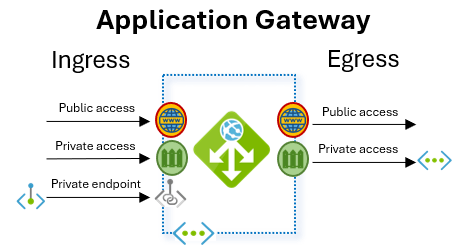

One of the most archetypal examples of single-tenant services is Azure Application Gateway:

For ingress, you can access an Application Gateway over its public or its private IP address. You can also connect to it using private endpoints.

The Application Gateway will then send egress traffic to endpoints reachable from its private IP address (other Azure virtual machines, on-premises systems) or from its public IP address (on the Internet).

Complex PaaS services

What about more complex services that are composed of multiple elements? How do you secure the whole service? You have to understand each of those components, which deployment mechanisms they support, and how best to secure communications between them, as described in the previous sections.

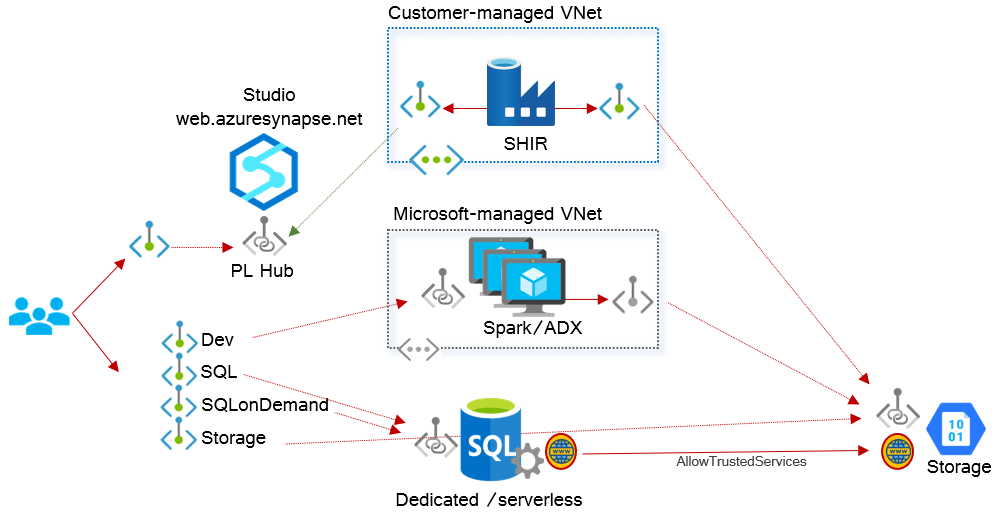

For example, if we take Synapse, there are quite a few moving parts in there (you can check this awesome post by Benjamin Leroux to learn more):

- There is a user portal and control plane element (https://web.azuresynapse.net).

- Synapse single-tenant integration runtimes can be deployed to Microsoft-managed VNets, but can be deployed as well to customer-managed VNets (Self-Hosted Integration Runtimes or SHIR).

- Spark and Data Explorer elements can only be deployed to Microsoft-managed VNets.

- Dedicated and serverless SQL pools are only available as multitenant services.

- The same goes for Azure Storage.

Consequently, you will have multiple patterns to secure the traffic between the elements:

- Users will connect to multitenant services using private endpoints.

- The self-hosted Integration Runtimes will connect to on-premises resources using their private IP addresses, and to other PaaS services using private endpoints.

- The Spark pools will connect to other PaaS services using managed private endpoints.

- Dedicated or serverless pools will connect to Azure Storage using public IP addresses, and communication will be secured using managed identities (“allow trusted services”).
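One way to do this exercise systematically is to write the flows down as an inventory and group them by connectivity pattern, since each pattern comes with its own set of controls. The following sketch does that for the Synapse flows above; the labels are informal and `by_mechanism` is a hypothetical helper, not an Azure API.

```python
from __future__ import annotations

# Informal labels for the Synapse flows listed above (not Azure resource types).
SYNAPSE_FLOWS: dict[tuple[str, str], str] = {
    ("users", "multitenant services"): "private endpoint",
    ("self-hosted IR", "on-premises resources"): "private IP address",
    ("self-hosted IR", "other PaaS services"): "private endpoint",
    ("Spark pools", "other PaaS services"): "managed private endpoint",
    ("SQL pools", "Azure Storage"): "public IP address (trusted services)",
}


def by_mechanism() -> dict[str, list[tuple[str, str]]]:
    """Group (source, destination) flows by the connectivity pattern they use."""
    grouped: dict[str, list[tuple[str, str]]] = {}
    for flow, mechanism in SYNAPSE_FLOWS.items():
        grouped.setdefault(mechanism, []).append(flow)
    return grouped
```

Grouping this way shows that a single Synapse workspace already involves four distinct connectivity patterns.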

For other services, you need to do a similar exercise. For example, the Azure Machine Learning documentation does a great job of describing the different components of its architecture and multiple options to interconnect them.

For your reference…

Now comes the question: for the services I use, what type are they? I was very hesitant about this, because whatever list I come up with will never be complete, and it will be obsolete the day after I write it. Still, I think it could help some folks, so here we go. I am listing the services I work with most frequently; if you want me to look at others, just let me know:

| Service | Multitenant | VNet-injected | Managed-VNet-injected | Comments |
|---|---|---|---|---|
| Azure Storage | Y | N | N | |
| App Services | Y | Y | N | ASEv2 and ASEv3 use different technologies for VNet injection |
| Azure Key Vault | Y | N | N | |
| Azure Managed HSM | N | N | Sort of | Integration via ExpressRoute |
| AKS (worker nodes) | N | Y | N | |
| AKS (private cluster API) | N | Y | N | It used to follow the private link pattern |
| ACR | Y | N | N | |
| Azure SQL | Y | N | N | |
| Azure SQL MI | N | Y | N | |
| ADF portal | Y | N | N | |
| ADF integration runtime | Y | Y | Y | SHIRs can be deployed in customer VNets |
| Synapse portal | Y | N | N | |
| Synapse ADX | N | N | Y | |
| Synapse Spark cluster | N | N | Y | |
| Purview portal | Y | N | N | |
| Purview auto-resolve IR | Y | Y | N | |
| Purview SHIR | N | N | Y | |
| HDInsight | N | Y | Y | |
| Databricks | N | Y | Y | |
| Stream Analytics | N | N | Y | Jobs can be deployed to VNets (preview) |
| ADX | N | N | Y | |
| AML compute instances | N | Y | Y | Docs here |
| AML compute clusters | N | Y | Y | Docs here |
| AML compute clusters (AKS) | N | N | Y | Docs here |
| AML managed online endpoint | N | N | Y | Docs here |
| Azure Monitor | Y | N | N | Use AMPLS concept |
| Azure Monitor Log Analytics | Y | N | N | Use AMPLS concept |
| Application Insights | Y | N | N | Use AMPLS concept |
| Application Gateway | N | Y | N | Separate control traffic in preview |
| Azure Firewall | N | Y | N | Separate NIC for control traffic optional |
| Event Hubs | Y | N | N | |
| Service Bus | Y | N | N | |

Glossary:

- AKS: Azure Kubernetes Service

- ACR: Azure Container Registry

- ADF: Azure Data Factory

- ADX: Azure Data Explorer

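- AML: Azure Machine Learning

- AMPLS: Azure Monitor Private Link Scope

- ASE: App Service Environment

- IR: Integration Runtime

- MI: Managed Instance

- SHIR: Self-Hosted Integration Runtime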

Wrapping up…

Hopefully this article armed you with some understanding of the connectivity options you can expect to find when navigating Microsoft’s documentation on PaaS service connectivity.
