Sometimes you meet an old friend you haven’t seen for many years, and although both of you might have evolved differently during that time, more often than not you find the common ground and the reasons why you loved each other.

Before I get any more sentimental, that is a bit of what I have experienced when playing around with the newest version of Cisco Application Centric Infrastructure (this time I am really talking about that ACI), and more concretely, its integration with Microsoft Azure. I haven’t really worked with ACI since I finished Deploying ACI, the book I had the honor to write with my friend Frank Dagenhardt, so lots of things were new to me.

I have to say that I started the exercise with a good amount of scepticism. I normally don’t like having my devices controlled by a multi-vendor application, since those tend to support only a fraction of the available functionality of the objects they manage. For the same reason that I would never have allowed a third-party app to configure my Cisco routers in my network admin days, I have developed a certain allergy to multi-cloud software over the past few years.

So why go through the hassle? What does Cisco ACI put on the table that you don’t already have in Azure? (Note that everything in this post is my own opinion, and not necessarily Microsoft’s or Cisco’s.)

- Intent-based security policy decoupled from network design: that is a mouthful, but what does it mean? Traditional networks (and Azure is not too different) use IP constructs to configure network security: your NSGs and Azure Firewall policies mostly use IP addresses, which you need to update whenever your IP schema changes. As we will see later in the post, this is not the case with ACI.

- Automatic inter-region connectivity: this is not too different from Azure Virtual WAN. ACI will deploy a multi-region hub and spoke design, where the hubs are completely managed by the product. It goes one step further and even manages the spokes for you, so the only thing you need to do is create your Virtual Machines in the right VNet, as we will see later.

- Automatic onprem connectivity: I didn’t test this since I don’t have any actual ACI fabric, so not much I can say about it.

- Same policy in your onprem fabric and in Azure: having the possibility of using the same constructs to define your network policy across multiple fabrics is quite a powerful concept.

Modeling your Application

The first thing you need to do in ACI is define the network connectivity for your application components. You cannot escape this first step since, as we will see later, ACI starts with no connectivity whatsoever (“secured by design”, as my marketing friends would say).

Do you remember when I said that security policy is decoupled from network design? Welcome to the ACI policy. ACI uses an innovative policy model that you find in other places in the industry too, such as OpenStack Group Based Policy. This is my favorite aspect of ACI, but one that can make it difficult to learn.

Let me clarify that this blog is not supposed to be a primer on EPGs, contracts, etc., since there is enough documentation out there doing so. I will try to define the most important concepts; for further details feel free to refer to the Cisco docs.

The first step is to define your Endpoint Groups (EPGs). The neat thing here is that you can define which VMs belong to which EPG based on different attributes. I have used ARM tags, because that way I can change the EPG for an Azure VM with just a modification of its tags. For my test I am using a 3-tier application (I know, how original), where my web server is this, my app server this, and my database MySQL on an Ubuntu VM. More or less in the middle of the dashboard you see the rule matching for the ARM tag “tier” and the value “web”:

By the way, I just introduced the term APIC (Application Policy Infrastructure Controller), the management plane for Cisco ACI.

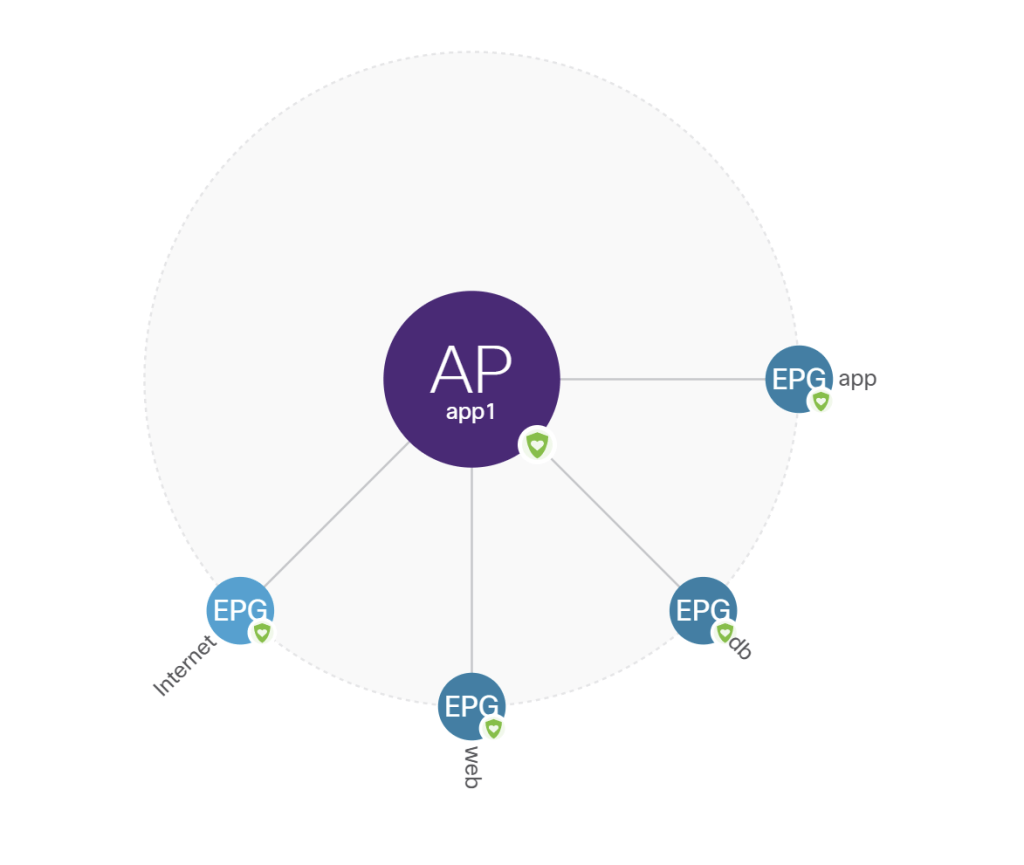
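To make the tag-based matching a bit more tangible, here is a minimal Python sketch of how such a classification could work. It is not how the APIC is implemented: the selector table, the `classify` function and the `owner` tag are my own illustrative assumptions, and only the `tier` tag comes from this lab.

```python
from typing import Optional

# Hypothetical selectors: EPG name -> tag key/value pairs a VM must carry.
EPG_SELECTORS = {
    "web": {"tier": "web"},
    "app": {"tier": "app"},
    "db": {"tier": "db"},
}


def classify(vm_tags: dict) -> Optional[str]:
    """Return the first EPG whose selector is fully matched by the VM's tags."""
    for epg, selector in EPG_SELECTORS.items():
        if all(vm_tags.get(key) == value for key, value in selector.items()):
            return epg
    return None  # no selector matched: the VM belongs to no EPG


vm = {"tier": "web", "owner": "lab"}  # "owner" is an unrelated, made-up tag
print(classify(vm))   # web
vm["tier"] = "app"    # retagging is all it takes to move the VM
print(classify(vm))   # app
```

The point of the sketch is that the VM's IP address never shows up: changing one tag is enough to move the machine to a different EPG.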

There is one special EPG you need to define to allow access from outside, in ACI terms an “external EPG”. I just defined an external EPG called “Internet”, mapped to 0.0.0.0/0 (for stuff outside of ACI you are indeed limited to IP addresses). Here is the application overview visualization showing the 3 application EPGs (one per app tier) plus the external EPG:

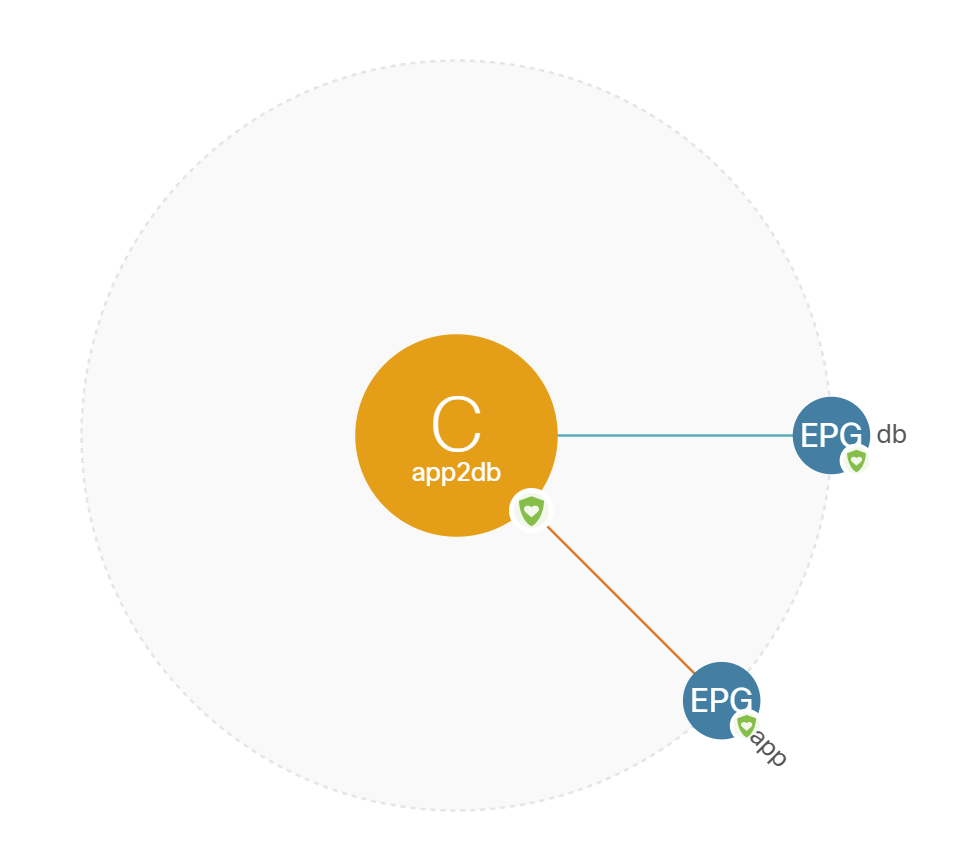

You would then define which connectivity each EPG has with each other EPG. In my example, the “app” EPG needs to talk to the “db” EPG on TCP port 3306 (MySQL’s standard port). I created an “app2db” contract associated with a filter that allows port 3306, where the “db” EPG is providing the service (green line in the visualization below) and the “app” EPG is consuming it (red line):

The first time you are confronted with this, the first reaction is usually “wow, that is a lot of strange words just to define an access list”. But please consider what we have done: we have a model that describes the policy for our application, and that model is independent of the underlying technology, the number of Azure regions or onprem sites, and the IP address scheme.
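If you prefer code to diagrams, the provider/consumer idea can be sketched in a few lines of Python. This is only a toy model under my own assumptions (the `Filter` and `Contract` classes and the `allowed` function are hypothetical names, not an ACI API); the “app2db” contract and port 3306 are the ones from this lab.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Filter:
    protocol: str
    port: int


@dataclass(frozen=True)
class Contract:
    name: str
    provider: str   # EPG offering the service
    consumer: str   # EPG initiating connections to the service
    filters: tuple  # Filter objects describing the permitted traffic


def allowed(contracts, src_epg, dst_epg, protocol, port):
    """True if some contract lets src_epg (consumer) reach dst_epg (provider).

    Anything not covered by a contract is denied in this sketch.
    """
    wanted = Filter(protocol, port)
    return any(
        c.consumer == src_epg and c.provider == dst_epg and wanted in c.filters
        for c in contracts
    )


app2db = Contract("app2db", provider="db", consumer="app",
                  filters=(Filter("tcp", 3306),))

print(allowed([app2db], "app", "db", "tcp", 3306))  # True
print(allowed([app2db], "db", "app", "tcp", 3306))  # False: wrong direction
print(allowed([app2db], "web", "db", "tcp", 3306))  # False: no contract
```

Notice that nothing in the model mentions a region, a VNet or an IP address, which is exactly what makes it portable.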

Azure Regions

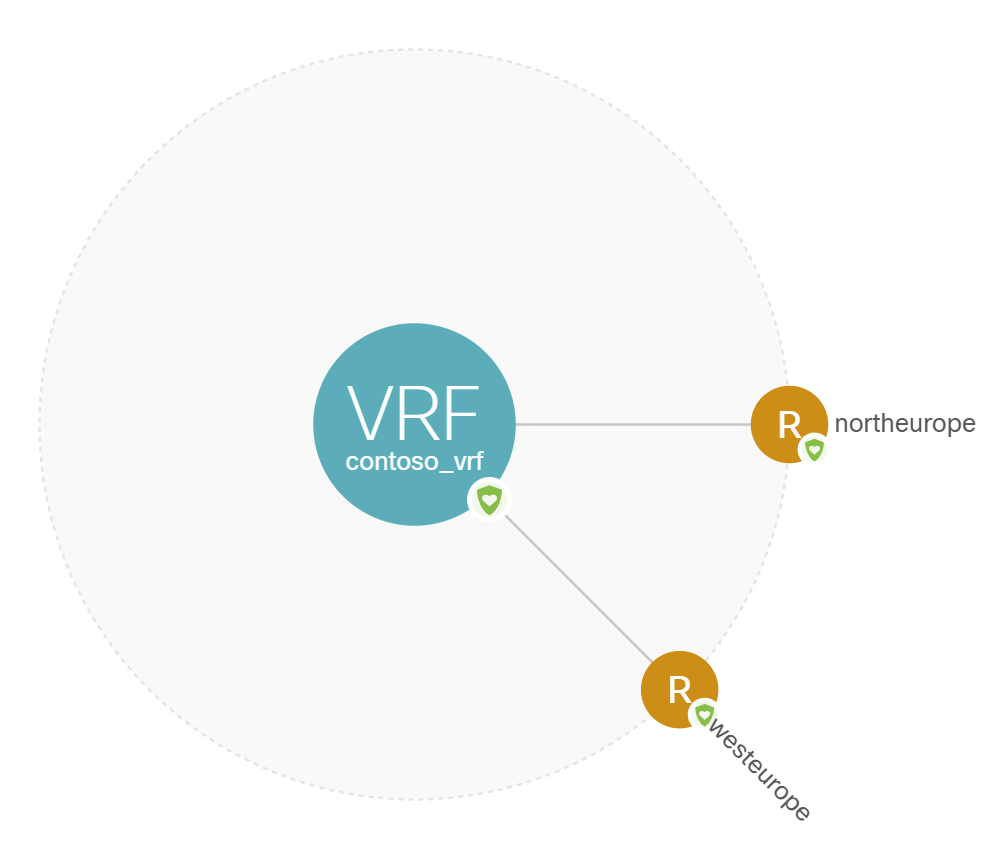

In ACI you will need to specify in which regions you would like it to create the hub and spoke design. In my example I picked North Europe and West Europe.

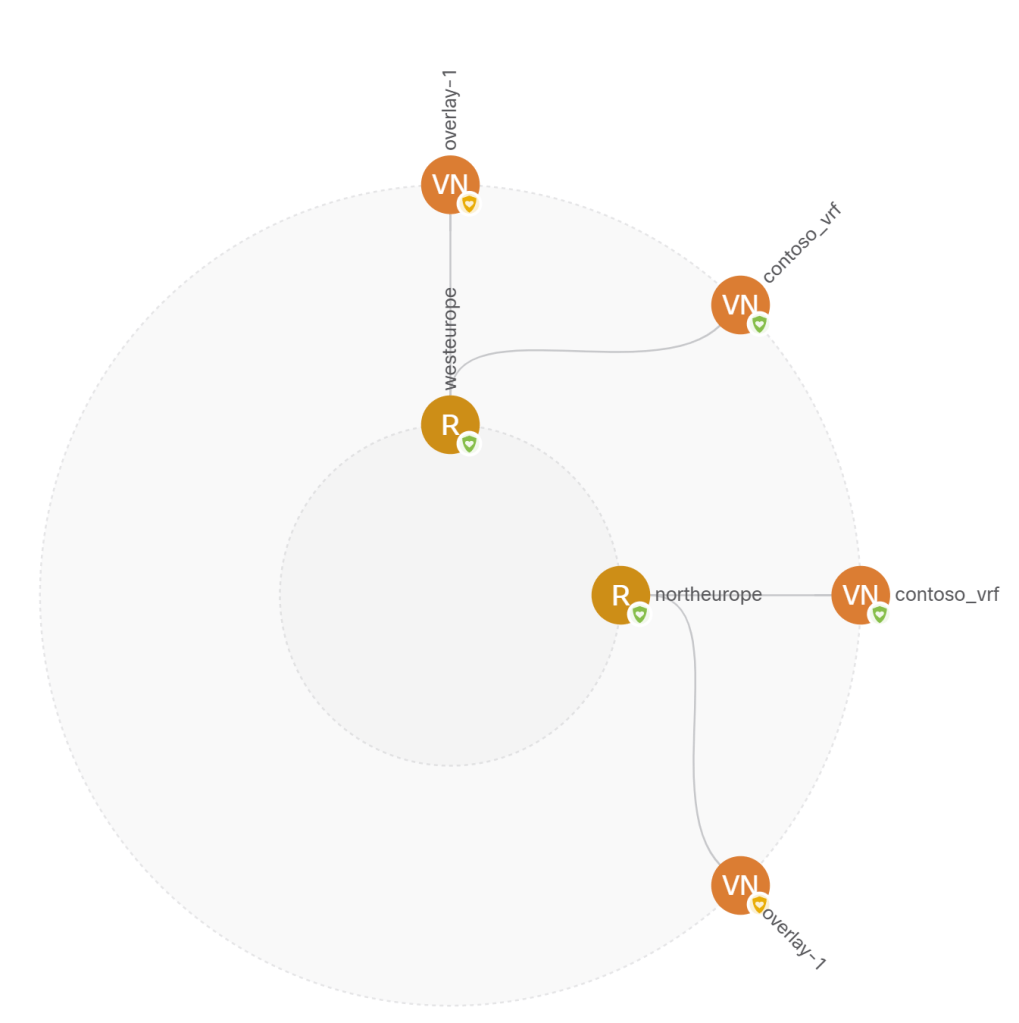

You can also configure whether you want inter-region connectivity, which of course I did, and that is neatly shown on a world map:

In this last representation you can see the Virtual Networks that the APIC has created: one hub in each region, and one spoke:

Let me now introduce the concept of tenants and VRFs: without going into too much detail, ACI is a multitenant system. You can configure one or many tenants, each mapped to the same or different subscriptions. For example, in an Azure Enterprise Scale environment you would want different workloads in different subscriptions, which would map to different tenants in ACI.

One tenant can have one or more VRFs, which are IP address spaces isolated from each other (although you can break that isolation with some advanced configuration). But let’s go back to our simple scenario where we have one single tenant (“contoso”) and one single VRF (“contoso_vrf”).

Translation: ACI-Azure, Azure-ACI

The APIC created the hub and spoke topology in the regions configured, so the only thing to do next is to create the Virtual Machines in the corresponding VNet and tag them so that ACI knows which EPG they belong to. The following screenshot shows the web VM tagged with tier:web:

One easy way to check that the EPG assignment worked is to have a look at the Application Security Groups assigned to the VM’s NICs. The following screenshot shows the “web_cloudapp-app1” ASG, which was configured automatically by the APIC:

So how are the contracts between EPGs actually implemented in Azure? Through a Network Security Group deployed at the subnet level. The NICs themselves have no NSG; the subnet NSG is common to all VMs in the same application (there might be some implications due to the maximum number of rules per NSG, which is 1,000 as documented in the Azure Networking Limits).

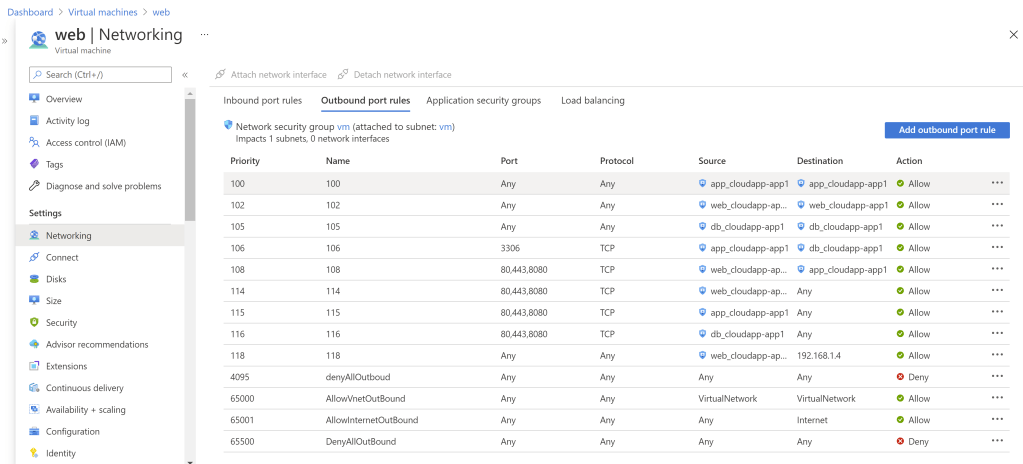

There is a lot to unpack in the following screenshot. The first thing to notice is rule 4095, a catch-all deny for every packet, which overrides the Azure default NSG rules that permit intra-VNet traffic.

We already saw that the APIC automatically configured Application Security Groups in the VMs identified as belonging to specific EPGs, and you can see in the VM inbound rules how the contracts map to the NSG configuration:

- Rules 101, 103 and 104 allow all traffic inside of the “app”, “web” and “db” EPGs respectively. This default behavior of ACI to allow intra-EPG communication can be modified if required.

- Rule 107 is the result of the “app2db” contract, and allows the “app” EPG to communicate with the “db” EPG on the MySQL port 3306.

- Rule 109 allows “web” VMs to talk to “app” VMs on web ports (the app offers a REST API).

- Rule 110 allows the external EPG “Internet” to communicate with the “web” VMs on web ports.

- And finally, rules 111, 112 and 113 are the result of me having an additional contract between Internet and all EPGs so that I can connect to my VMs, but in a production setup you would not have this.
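The mapping from contracts to NSG rules can be approximated with a short Python sketch. I do not know how the APIC really numbers its rules, so the consecutive priorities here are an assumption and will not match the screenshot; port 80 is also an assumption standing in for the “web ports”, and the function names are made up. Only the overall shape (intra-EPG allows, one allow per contract, deny-all at 4095) follows what the screenshot shows.

```python
# Hypothetical contracts as (consumer EPG, provider EPG, TCP port).
# Port 80 stands in for the "web ports", which are not spelled out in the post.
CONTRACTS = [
    ("app", "db", 3306),
    ("web", "app", 80),
    ("Internet", "web", 80),
]
EPGS = ["app", "web", "db"]


def render_inbound_rules(epgs, contracts, first_priority=101):
    """Build a list of NSG-like inbound rules (plain dicts)."""
    rules = []
    priority = first_priority
    # 1. Intra-EPG traffic: members of the same group may talk freely.
    for epg in epgs:
        rules.append({"priority": priority, "source": epg,
                      "destination": epg, "port": "*", "access": "Allow"})
        priority += 1
    # 2. One allow rule per contract, from the consumer to the provider.
    for consumer, provider, port in contracts:
        rules.append({"priority": priority, "source": consumer,
                      "destination": provider, "port": str(port),
                      "access": "Allow"})
        priority += 1
    # 3. Catch-all deny, so that the Azure default allow rules never match.
    rules.append({"priority": 4095, "source": "*", "destination": "*",
                  "port": "*", "access": "Deny"})
    return rules


def evaluate(rules, source, destination, port):
    """First match wins, in ascending priority order, as in an NSG."""
    for rule in sorted(rules, key=lambda r: r["priority"]):
        if (rule["source"] in ("*", source)
                and rule["destination"] in ("*", destination)
                and rule["port"] in ("*", str(port))):
            return rule["access"]
    return "Deny"


rules = render_inbound_rules(EPGS, CONTRACTS)
print(evaluate(rules, "app", "db", 3306))  # Allow
print(evaluate(rules, "web", "db", 3306))  # Deny
print(len(rules))                          # 7
```

In this sketch the rule count is the number of EPGs plus the number of contracts plus one, which is a handy back-of-the-envelope figure to compare against the 1,000-rule NSG limit.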

You might be asking yourself about rules 117 and 119. They contain an IP address, what is it? For test purposes I have deployed a second web server in a different region. As you might know, Application Security Groups only work for rules between VMs in the same VNet, so APIC is forced to use IP addresses here because of this Azure limitation:

- Rule 117 is the complement to rule 109, so that the “web” VMs in the remote region (in this case only 192.168.1.4) can talk to the “app” VMs in this region

- And rule 119 is the complement to rule 101, so that the “web” VM in the remote region can talk to the “web” VMs in the local region, since they are all in the same EPG.
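The choice between an ASG and a plain IP address can be sketched like this. It is my own guess at the logic, not the APIC's code: the function name, the VNet names and the local address 192.168.0.4 are hypothetical, while 192.168.1.4 and the ASG name “web_cloudapp-app1” are taken from this lab.

```python
def sources_for(consumer_endpoints, local_vnet, asg_name):
    """Return the NSG rule sources needed to admit a consumer EPG.

    consumer_endpoints is a list of (ip, vnet) tuples. Members in the local
    VNet can be referenced through the ASG; members in any other VNet have
    to be listed by IP address, since an ASG cannot span VNets.
    """
    sources = []
    if any(vnet == local_vnet for _, vnet in consumer_endpoints):
        sources.append(("asg", asg_name))
    remote_ips = sorted(ip for ip, vnet in consumer_endpoints
                        if vnet != local_vnet)
    sources.extend(("ip", ip) for ip in remote_ips)
    return sources


# Hypothetical VNet names; 192.168.0.4 is a made-up local web VM address.
web_endpoints = [
    ("192.168.0.4", "spoke-local"),
    ("192.168.1.4", "spoke-remote"),
]
print(sources_for(web_endpoints, "spoke-local", "web_cloudapp-app1"))
# [('asg', 'web_cloudapp-app1'), ('ip', '192.168.1.4')]
```

Each additional VM in a remote region adds another IP-based source, so the cross-region rules are the ones that would need updating when remote workloads come and go.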

The outbound rules can be similarly mapped back to the contracts between the application EPGs, which I will not do here though, for the sake of brevity:

Hub and Spoke Topology

Interestingly enough, VMs can be deployed in any of the supported regions for this configuration (North and West Europe), and they will be able to communicate with each other as described by the application contracts. But how does IP forwarding work across the regions?

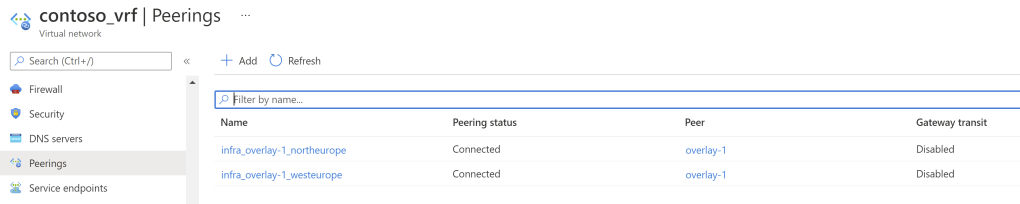

As described earlier, APIC created two workload VNets for our tenant VRF (spokes in Azure parlance). Looking into the peerings of one of them, you can see that it is peered to two other VNets: those are the hub VNets in each region:

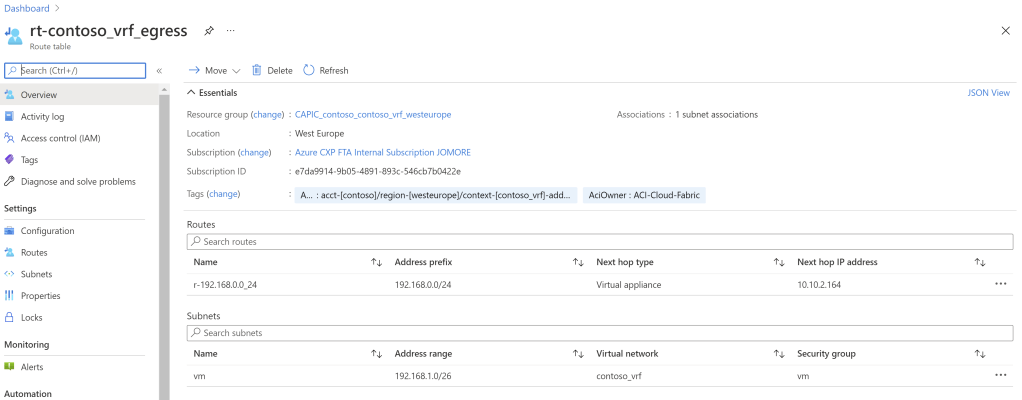
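As a rough illustration of the peering pattern (every spoke peered to the hub of every region), here is a tiny Python sketch. The VNet naming scheme and the function are purely hypothetical; the real names are the ones you see in the screenshots.

```python
from itertools import product


def spoke_to_hub_peerings(regions, vrf_name):
    """List (spoke VNet, hub VNet) pairs: each spoke peers with every hub."""
    return [
        (f"{vrf_name}-{spoke_region}", f"hub-{hub_region}")
        for spoke_region, hub_region in product(regions, regions)
    ]


peerings = spoke_to_hub_peerings(["northeurope", "westeurope"], "contoso_vrf")
for spoke, hub in peerings:
    print(f"{spoke} <-> {hub}")
print(len(peerings))  # 4
```

With two regions that is two peerings per spoke, matching what the portal shows; in this model the total grows with the square of the number of regions.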

Additionally, in the subnet where the workloads are deployed there is a route table associated, teaching the VMs in each region how to reach the VMs in the other regions. In West Europe there is a route for 192.168.0.0/24 (North Europe), and in North Europe you find the opposite route.

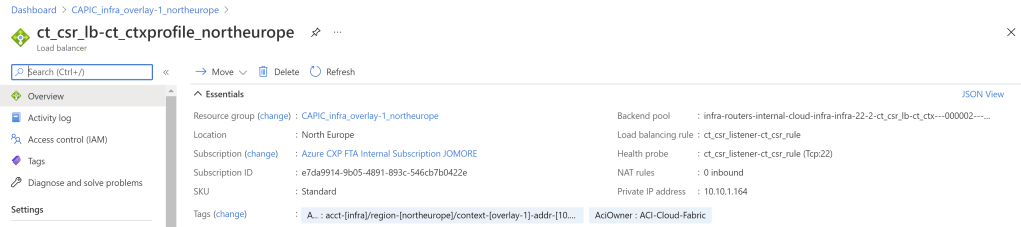
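For readers who like to see the forwarding decision spelled out, this is a small longest-prefix-match sketch using Python's standard `ipaddress` module. The next hop 10.10.1.164 is the one from the North Europe route table in this lab; the 192.168.1.0/24 prefix for West Europe is my assumption (inferred from the remote web VM 192.168.1.4), and the `VnetLocal` label simply stands for the VNet's own system route.

```python
import ipaddress


def next_hop(route_table, destination):
    """Return the next hop of the most specific route containing destination."""
    dest = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in route_table.items()
        if dest in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return None  # no route at all in this simplified table
    # The route with the longest prefix is the most specific one.
    return max(matches, key=lambda match: match[0].prefixlen)[1]


# Simplified view of the North Europe workload subnet.
NORTH_EUROPE_ROUTES = {
    "192.168.0.0/24": "VnetLocal",    # local address space
    "192.168.1.0/24": "10.10.1.164",  # assumed West Europe prefix
}

print(next_hop(NORTH_EUROPE_ROUTES, "192.168.1.4"))  # 10.10.1.164
print(next_hop(NORTH_EUROPE_ROUTES, "192.168.0.5"))  # VnetLocal
```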

What are those IP addresses in the next hop fields? If we look at the route in North Europe, it is pointing to 10.10.1.164. This corresponds to an Azure Load Balancer deployed in the North Europe hub VNet:

The Azure Load Balancer sends traffic to two Cisco CSRs deployed as Network Virtual Appliances in the hub VNet, which handle the inter-region communication as well as the connectivity to onprem (which I don’t have in my lab scenario):

A minor remark is that these routers are deployed with Availability Sets instead of Availability Zones; I am not sure whether you can configure that anywhere in the APIC.

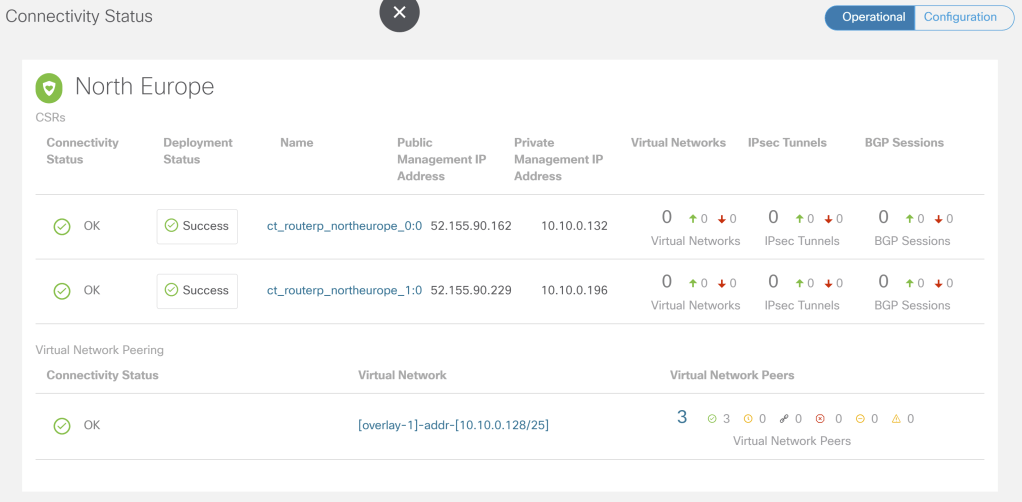

In APIC you can also see the state of these routers. There are some counters for IPsec tunnels and BGP sessions, which would start increasing when connecting to onprem networks:

One important thing to mention is that these CSRs are deployed with multi-tenancy functionality. In this example I only have one application tenant (Contoso), but you can see three other VRFs used by the system, so that control and management plane traffic is separated from application traffic:

    ct-routerp-northeurope-0-0#sh ip vrf
      Name                             Default RD            Interfaces
      GS                               100:100               Vi0
      contoso:contoso_vrf              10.10.0.180:8         BD8
      infra:overlay-1                  <not set>
      management                       <not set>             Gi1
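If you wanted to check this output programmatically, for example to list the tenant VRFs present on a router, a small parser could look like the sketch below. It is only an illustration under my own assumptions: the function name is made up, the sample text is the output above, and the regular expression does not handle interface lists that wrap onto continuation lines.

```python
import re

# One row: VRF name, then either "<not set>" or an RD, then optional interfaces.
ROW = re.compile(r"^(?P<name>\S+)\s+(?P<rd><not set>|\S+)\s*(?P<interfaces>.*)$")

SAMPLE = """\
ct-routerp-northeurope-0-0#sh ip vrf
  Name                             Default RD            Interfaces
  GS                               100:100               Vi0
  contoso:contoso_vrf              10.10.0.180:8         BD8
  infra:overlay-1                  <not set>
  management                       <not set>             Gi1
"""


def parse_show_ip_vrf(output):
    """Parse 'show ip vrf' text into {name: {"rd": ..., "interfaces": [...]}}."""
    vrfs = {}
    for line in output.splitlines():
        line = line.strip()
        # Skip blanks, the CLI prompt line and the column header.
        if not line or "#" in line or line.startswith("Name"):
            continue
        match = ROW.match(line)
        if match is None:
            continue
        rd = match.group("rd")
        vrfs[match.group("name")] = {
            "rd": None if rd == "<not set>" else rd,
            "interfaces": match.group("interfaces").split(),
        }
    return vrfs


vrfs = parse_show_ip_vrf(SAMPLE)
# Tenant VRFs are the ones with a route distinguisher, minus the system "GS".
print([name for name, vrf in vrfs.items() if vrf["rd"] and name != "GS"])
# ['contoso:contoso_vrf']
```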

Adding Up

I would like to congratulate the Cisco ACI team: I was frankly impressed by how flawlessly the ACI policy was rendered to Azure, leveraging native Azure constructs such as VNet peerings, ASGs and route tables. In the absence of a true intent-based network policy framework in Azure, Cisco ACI is the best tool for the job in my humble opinion.