I see more and more organizations deploying workloads across different clouds, and sometimes those workloads need to communicate with each other. There are multiple options to connect clouds together, the cheapest being an encrypted network tunnel over the public Internet, also known as IPsec VPN.

All clouds support deploying your favorite network vendor as compute instances, be it traditional virtual networking appliances, SD-WAN products or other newer cloud-native technologies. For example, I really like Aviatrix and their Insane Mode Encryption (I love that feature name BTW), which is not capped at 1.25 Gbps per tunnel like most other IPsec implementations. Another option is using the native VPN constructs in each cloud, and this is what I will cover in this post for AWS, Azure and Google Cloud.

I will be using each cloud’s CLI to create and diagnose resources. If you want to have a deeper peek, you can see the whole script to create this environment in my GitHub repo.

IPsec+BGP between Azure and AWS

First of all, this post is not trying to reinvent the wheel. There are other excellent writeups out there, such as the blog post of my esteemed colleague Ricardo Martins on How to create a VPN between Azure and AWS (with static routing), or the official Microsoft documentation on How to connect AWS and Azure using a BGP-enabled VPN gateway, which is closer to how I am going to approach things (in my opinion dynamic routing is always a good idea). However, I will focus on the CLI and not on the portal (you know I am a CLI fan if you have read other blog posts of mine), and more importantly, on WHY certain configurations are required.

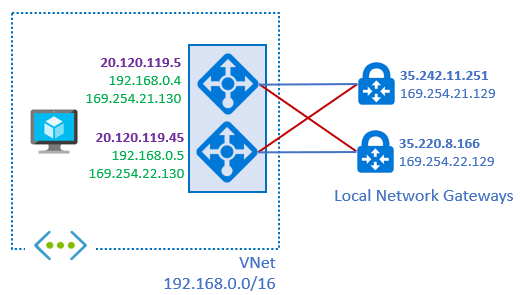

Azure and AWS have very significant differences, which will drive most of the configuration complexities we will see later. The main discrepancy is the way in which IP addresses are assigned: while Azure assigns IP addresses at the device level (read here loopbacks and unnumbered tunnel interfaces), AWS assigns the IP addresses at the tunnel interface level, as the following picture shows:

Let’s start with the similarities though: both Azure and AWS VPN gateways are actually deployed over two “instances” for high availability. In Azure you can choose to “hide” the second instance, effectively going for an active/passive setup, but this is typically not recommended because of the higher convergence times.

Each Azure instance gets a single public IP address, regardless of how many tunnels you configure. As a consequence, you will define two Customer Gateways (CGW) in AWS, one for each of these public IPs:

However, as you can see in the following output, each AWS IPsec tunnel gets its own public IP address, so we will have a total of four public IPs in AWS (this output was taken with the whole environment configured, which is why the tunnels already show as up):

    ❯ aws ec2 describe-vpn-connections --query 'VpnConnections[*].VgwTelemetry[*].[OutsideIpAddress,StatusMessage,Status]' --output text
    34.236.200.191  1 BGP ROUTES    UP
    35.172.156.144  1 BGP ROUTES    UP
    34.200.189.116  1 BGP ROUTES    UP
    35.171.130.205  1 BGP ROUTES    UP

Hence, we will need four Local Network Gateways (LNGs) in Azure, each with the corresponding public and private IP addresses of the AWS tunnel endpoint:

    ❯ az network local-gateway list -g "$rg" -o table
    Name    Location    ResourceGroup    ProvisioningState    GatewayIpAddress    AddressPrefixes
    ------  ----------  ---------------  -------------------  ------------------  -----------------
    aws00   eastus      multicloud       Succeeded            34.200.189.116
    aws01   eastus      multicloud       Succeeded            34.236.200.191
    aws10   eastus      multicloud       Succeeded            35.171.130.205
    aws11   eastus      multicloud       Succeeded            35.172.156.144

When configuring the LNGs in Azure you will wonder which private IP addresses to configure for the AWS gateways. You need to know that in AWS you only configure the subnet assigned to each IPsec connection, and AWS will pick the first address for each tunnel. For example, if the first tunnel has the subnet 169.254.21.0/30, the AWS gateway will pick the .1, and will expect the other side to have the .2.
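To make the addressing rule concrete, here is a minimal Python sketch using the standard `ipaddress` module. The four /30 subnets are an assumption on my side, inferred from the BGP neighbor addresses that appear in the outputs of this post, and the function name is purely illustrative.

```python
import ipaddress


def tunnel_inside_addresses(cidr):
    """Return (aws_side, remote_side) for a /30 tunnel inside subnet."""
    network = ipaddress.ip_network(cidr)
    # A /30 has exactly two usable hosts: AWS takes the first one and
    # expects the remote gateway (Azure here) to use the second one.
    aws_side, remote_side = list(network.hosts())
    return str(aws_side), str(remote_side)


# Assumed subnets, inferred from the BGP peers seen in this post
for cidr in ("169.254.21.0/30", "169.254.21.4/30",
             "169.254.22.0/30", "169.254.22.4/30"):
    aws_ip, azure_ip = tunnel_inside_addresses(cidr)
    print(f"{cidr}: AWS={aws_ip} Azure={azure_ip}")
```

For 169.254.21.0/30 this gives 169.254.21.1 for AWS and 169.254.21.2 for Azure, which matches the example above.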

Another important aspect to understand is that in both Azure and AWS, when you define an LNG/CGW, both gateway instances will try to connect to it, practically creating two tunnels for every configured LNG/CGW. So if we configure 4 LNGs in Azure, the instances will try to establish 8 IPsec tunnels with 8 BGP adjacencies, out of which only half will actually work, as the following diagram shows:

This can be seen, for example, by looking at the BGP peers of each VNG instance, which show which BGP adjacencies are up and which ones are not. For example, for the first VNG instance (identified by its default BGP IP address of 192.168.1.4):

    ❯ az network vnet-gateway list-bgp-peer-status -n $vpngw_name -g $rg -o table --query 'value[?localAddress == `'$vng0_bgp_ip'`]'
    LocalAddress    Neighbor      Asn    State       RoutesReceived    MessagesSent    MessagesReceived    ConnectedDuration
    --------------  ------------  -----  ----------  ----------------  --------------  ------------------  -------------------
    192.168.1.4     169.254.21.1  65002  Connected   1                 3867            3391                09:23:46.1155616
    192.168.1.4     169.254.21.5  65002  Connected   1                 3848            3377                09:21:38.0064097
    192.168.1.4     169.254.22.1  65002  Connecting  0                 0               0
    192.168.1.4     169.254.22.5  65002  Connecting  0                 0               0
    192.168.1.4     192.168.1.4   65001  Unknown     0                 0               0
    192.168.1.4     192.168.1.5   65001  Connected   3                 5835            5830                3.11:18:40.3414080

As an additional comment to the previous output, 192.168.1.5 is the second instance, with which the first one talks over iBGP. 192.168.1.4 is actually itself, so the adjacency cannot work (shown by the state of Unknown).

We can have a look at the BGP peers of the second instance. Here again, two neighbors will remain stuck at the “Connecting” state (the 169.254.21.x ones, reachable only from the first VNG instance), while the other two have established BGP adjacencies:

    ❯ az network vnet-gateway list-bgp-peer-status -n $vpngw_name -g $rg -o table --query 'value[?localAddress == `'$vng1_bgp_ip'`]'
    LocalAddress    Neighbor      Asn    State       ConnectedDuration    RoutesReceived    MessagesSent    MessagesReceived
    --------------  ------------  -----  ----------  -------------------  ----------------  --------------  ------------------
    192.168.1.5     169.254.22.1  65002  Connected   09:24:22.9616760     1                 3873            3394
    192.168.1.5     169.254.22.5  65002  Connected   09:19:21.8813519     1                 3838            3361
    192.168.1.5     169.254.21.1  65002  Connecting                       0                 0               0
    192.168.1.5     169.254.21.5  65002  Connecting                       0                 0               0
    192.168.1.5     192.168.1.4   65001  Connected   3.11:19:35.9336075   3                 5829            5838
    192.168.1.5     192.168.1.5   65001  Unknown                          0                 0               0
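The half-up, half-down pattern in the two peer tables can be reproduced with a small model. This Python sketch rests on two assumptions of mine: that each Azure instance owns the second host address of two of the AWS /30 subnets (inferred from the tables, not taken from the actual configuration), and that an adjacency can only come up when the instance has an address in the neighbor's /30. It is a simplification of what the gateways really do, meant only to illustrate the counting.

```python
import ipaddress

# Assumption: custom APIPA addresses per Azure VNG instance, inferred from
# the peer tables (AWS takes the first host of each /30, Azure the second).
AZURE_APIPAS = {
    "192.168.1.4": ["169.254.21.2", "169.254.21.6"],
    "192.168.1.5": ["169.254.22.2", "169.254.22.6"],
}
AWS_BGP_PEERS = ["169.254.21.1", "169.254.21.5", "169.254.22.1", "169.254.22.5"]


def can_establish(local_apipas, neighbor):
    """True if the instance owns an address in the neighbor's /30."""
    subnet = ipaddress.ip_network(f"{neighbor}/30", strict=False)
    return any(ipaddress.ip_address(ip) in subnet for ip in local_apipas)


def expected_states():
    """Map every (instance, neighbor) attempt to its expected BGP state."""
    return {
        (instance, neighbor):
            "Connected" if can_establish(apipas, neighbor) else "Connecting"
        for instance, apipas in AZURE_APIPAS.items()
        for neighbor in AWS_BGP_PEERS
    }


states = expected_states()
for (instance, neighbor), state in states.items():
    print(f"{instance} -> {neighbor}: {state}")
up = sum(1 for state in states.values() if state == "Connected")
print(f"{up} of {len(states)} attempted adjacencies come up")
```

Two instances times four LNGs gives eight attempts, and the model marks four of them as Connected, the same split the CLI reports.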

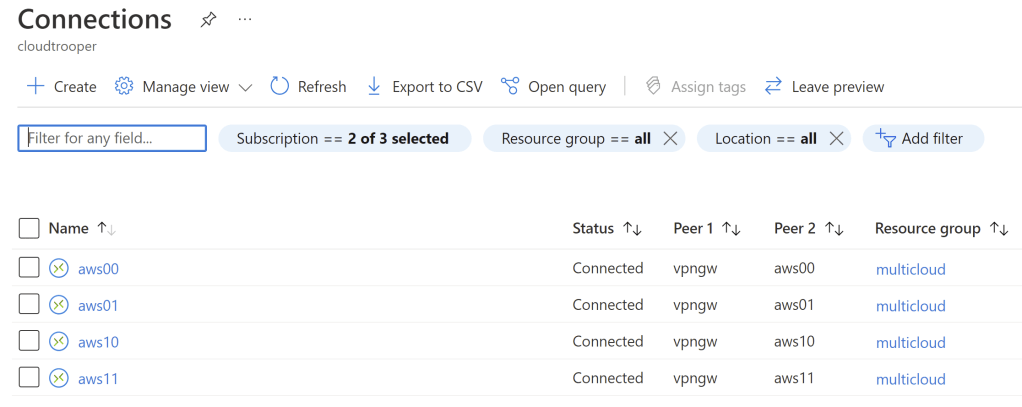

There is one interesting question: if for every LNG and every connection two tunnels will be attempted, but only one will work, what will be the state of the connections? Here the CLI and the Azure portal seem to differ. In the portal all four connections will be shown as “Connected”:

However, the CLI is a bit pickier, and inconsistent (one connection shows as Connected, the other three do not). As a consequence, the BGP adjacencies remain the best way to determine whether connectivity is working or not:

    ❯ az network vpn-connection list -g "$rg" -o table --query '[].{Name:name,EgressBytes:egressBytesTransferred,IngressBytes:ingressBytesTransferred,Mode:connectionMode,Status:tunnelConnectionStatus[0].connectionStatus,TunnelName:tunnelConnectionStatus[0].tunnel}'
    Name    EgressBytes    IngressBytes    Mode     Status     TunnelName
    ------  -------------  --------------  -------  ---------  ------------------
    aws00   0              0               Default
    aws01   0              0               Default
    aws10   18720          3780            Default  Connected  aws10_20.120.119.5
    aws11   0              0               Default
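Since the connection status is not reliable, a scripted health check is better based on the BGP peer status. The following Python sketch shows the idea on a hand-built sample. I am assuming that the JSON output of `az network vnet-gateway list-bgp-peer-status` has a top-level `value` list whose items carry `localAddress`, `neighbor`, `asn` and `state` keys (mirroring the table columns shown earlier); check the real output before relying on it.

```python
import json
from collections import defaultdict

# Hand-built sample with the assumed JSON shape; values taken from the
# peer tables in this post (iBGP and extra fields omitted for brevity).
SAMPLE = json.loads("""
{"value": [
  {"localAddress": "192.168.1.4", "neighbor": "169.254.21.1", "asn": 65002, "state": "Connected"},
  {"localAddress": "192.168.1.4", "neighbor": "169.254.21.5", "asn": 65002, "state": "Connected"},
  {"localAddress": "192.168.1.4", "neighbor": "169.254.22.1", "asn": 65002, "state": "Connecting"},
  {"localAddress": "192.168.1.4", "neighbor": "169.254.22.5", "asn": 65002, "state": "Connecting"},
  {"localAddress": "192.168.1.5", "neighbor": "169.254.22.1", "asn": 65002, "state": "Connected"},
  {"localAddress": "192.168.1.5", "neighbor": "169.254.22.5", "asn": 65002, "state": "Connected"},
  {"localAddress": "192.168.1.5", "neighbor": "169.254.21.1", "asn": 65002, "state": "Connecting"},
  {"localAddress": "192.168.1.5", "neighbor": "169.254.21.5", "asn": 65002, "state": "Connecting"}
]}
""")


def established_by_instance(status, remote_asn):
    """Group the Connected neighbors of a given remote ASN by local instance."""
    result = defaultdict(list)
    for peer in status.get("value", []):
        if peer.get("asn") == remote_asn and peer.get("state") == "Connected":
            result[peer["localAddress"]].append(peer["neighbor"])
    return dict(result)


for instance, neighbors in established_by_instance(SAMPLE, 65002).items():
    print(f"{instance}: {len(neighbors)} adjacencies up ({', '.join(neighbors)})")
```

In this setup a healthy result is two established AWS adjacencies per instance. Fewer than that would mean one of the four working tunnels is down, regardless of what the connection status claims.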

Once the BGP adjacencies are established, the routes will start flowing to each side. In AWS you can verify that the routes are coming in:

    ❯ aws ec2 describe-route-tables --query 'RouteTables[*].Routes[].[State,DestinationCidrBlock,Origin,GatewayId]' --output text
    active  172.31.0.0/16   CreateRouteTable           local
    active  0.0.0.0/0       CreateRoute                igw-07b61238827895a2b
    active  172.16.0.0/16   CreateRouteTable           local
    active  0.0.0.0/0       CreateRoute                igw-095a50bd8954ecd80
    active  10.0.1.0/24     EnableVgwRoutePropagation  vgw-010c1ae480e88cd01
    active  192.168.0.0/16  EnableVgwRoutePropagation  vgw-010c1ae480e88cd01
    active  192.168.0.0/16  CreateRouteTable           local
    active  172.16.0.0/16   CreateRouteTable           local

In Azure you can have a look at the effective routes of a NIC for a similar verification:

    ❯ az network nic show-effective-route-table -n testvmVMNic -g $rg -o table
    Source                 State    Address Prefix    Next Hop Type          Next Hop IP
    ---------------------  -------  ----------------  ---------------------  -------------
    Default                Active   192.168.0.0/16    VnetLocal
    VirtualNetworkGateway  Active   172.16.0.0/16     VirtualNetworkGateway  192.168.1.4
    VirtualNetworkGateway  Active   172.16.0.0/16     VirtualNetworkGateway  192.168.1.5
    Default                Active   0.0.0.0/0         Internet
    Default                Active   10.0.0.0/8        None
    Default                Active   100.64.0.0/10     None
    [...]

And sure enough ping works alright from our Azure VM to the AWS EC2 instance, assuming your Azure NSGs and AWS ACLs and SGs are configured accordingly:

    jose@testvm:~$ ping 172.16.1.223
    PING 172.16.1.223 (172.16.1.223) 56(84) bytes of data.
    64 bytes from 172.16.1.223: icmp_seq=7 ttl=254 time=5.13 ms
    64 bytes from 172.16.1.223: icmp_seq=8 ttl=254 time=3.52 ms
    64 bytes from 172.16.1.223: icmp_seq=9 ttl=254 time=4.39 ms

Adding a VPN to Google Cloud

Now that we have connectivity between Azure and AWS, why stop there? If you have some real estate in Google, chances are that you will want to do something similar. The VPN concepts in Google Cloud are really interesting, mostly because they introduce a new element, the cloud router:

Another very interesting innovation is the fact that you can define a single External VPN Gateway with two public IP addresses, so that Google’s VPN Gateway knows that it does not need to build two tunnels from each instance, but only one (to each public IP in the External VPN Gateway). Once the configuration above is in place, two tunnels will be shown from the Google VPN Gateway perspective:

    ❯ gcloud compute vpn-tunnels list
    NAME      REGION    GATEWAY  PEER_ADDRESS
    azvpngw0  us-east1  vpngw    20.120.119.5
    azvpngw1  us-east1  vpngw    20.120.119.45

The default output for the previous command does not show the tunnel status, but a bit of jq magic can help us there:

    ❯ gcloud compute vpn-tunnels list --format json | jq -r '.[] | {name,peerIp,status,detailedStatus}|join("\t")'
    azvpngw0        20.120.119.5    ESTABLISHED     Tunnel is up and running.
    azvpngw1        20.120.119.45   ESTABLISHED     Tunnel is up and running.

The most interesting fact about Google’s implementation is that IPsec termination and BGP routing are separated. While the VPN Gateways terminate the IPsec tunnels, the BGP adjacencies are terminated in the Cloud Routers:

    ❯ gcloud compute routers get-status $gcp_router_name --region=$gcp_region --format='flattened(result.bgpPeerStatus[].name,result.bgpPeerStatus[].ipAddress, result.bgpPeerStatus[].peerIpAddress)'
    result.bgpPeerStatus[0].ipAddress:     169.254.21.129
    result.bgpPeerStatus[0].name:          azvpngw0
    result.bgpPeerStatus[0].peerIpAddress: 169.254.21.130
    result.bgpPeerStatus[1].ipAddress:     169.254.22.129
    result.bgpPeerStatus[1].name:          azvpngw1
    result.bgpPeerStatus[1].peerIpAddress: 169.254.22.130

In Azure we will again need two Local Network Gateways (one for each public IP address of Google’s VPN gateways), which will once more cause half of the tunnels not to be built, since Google will only answer on two of them:

The best way to inspect this is again the VNG BGP peer table. Here are the BGP neighbors of the first Azure VNG instance, where you can see that in addition to the AWS BGP peers we now have the two Google Cloud peers (169.254.21.129 and 169.254.22.129), of which only the first one works for this first VNG instance:

    ❯ az network vnet-gateway list-bgp-peer-status -n $vpngw_name -g $rg -o table --query 'value[?localAddress == `'$vng0_bgp_ip'`]'
    LocalAddress    Neighbor        Asn    State       RoutesReceived    MessagesSent    MessagesReceived    ConnectedDuration
    --------------  --------------  -----  ----------  ----------------  --------------  ------------------  -------------------
    192.168.1.4     169.254.21.129  65003  Connected   1                 68              56                  00:17:10.8890031
    192.168.1.4     169.254.22.129  65003  Connecting  0                 0               0
    192.168.1.4     169.254.21.1    65002  Connected   1                 4329            3793                10:29:48.0256095
    192.168.1.4     169.254.21.5    65002  Connected   1                 4306            3779                10:27:39.9164576
    192.168.1.4     169.254.22.1    65002  Connecting  0                 0               0
    192.168.1.4     169.254.22.5    65002  Connecting  0                 0               0
    192.168.1.4     192.168.1.4     65001  Unknown     0                 0               0
    192.168.1.4     192.168.1.5     65001  Connected   5                 5937            5932                3.12:24:42.2514559

And sure enough, the second VNG instance will show us that the Google Router adjacency to 169.254.22.129 will be up:

    ❯ az network vnet-gateway list-bgp-peer-status -n $vpngw_name -g $rg -o table --query 'value[?localAddress == `'$vng1_bgp_ip'`]'
    LocalAddress    Neighbor        Asn    State       ConnectedDuration    RoutesReceived    MessagesSent    MessagesReceived
    --------------  --------------  -----  ----------  -------------------  ----------------  --------------  ------------------
    192.168.1.5     169.254.21.129  65003  Connecting                       0                 0               0
    192.168.1.5     169.254.22.129  65003  Connected   00:16:47.4180498     1                 67              54
    192.168.1.5     169.254.21.1    65002  Connecting                       0                 0               0
    192.168.1.5     169.254.21.5    65002  Connecting                       0                 0               0
    192.168.1.5     169.254.22.1    65002  Connected   10:30:12.5371733     1                 4329            3795
    192.168.1.5     169.254.22.5    65002  Connected   10:25:11.4568492     1                 4296            3762
    192.168.1.5     192.168.1.4     65001  Connected   3.12:25:25.5091048   5                 5931            5940
    192.168.1.5     192.168.1.5     65001  Unknown                          0                 0               0

We can inspect the routes that come into the Google router, to verify that Azure VNGs are advertising the Azure VNet prefix (192.168.0.0/16):

    ❯ gcloud compute routers get-status $gcp_router_name --region=$gcp_region --format=json | jq -r '.result.bestRoutesForRouter[]|{destRange,routeType,nextHopIp} | join("\t")'
    172.16.0.0/16   BGP     169.254.21.130
    192.168.0.0/16  BGP     169.254.21.130
    172.16.0.0/16   BGP     169.254.22.130
    192.168.0.0/16  BGP     169.254.22.130
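If you would rather assert on this output than eyeball it, the same JSON can be checked programmatically. This is a minimal Python sketch on a hand-built sample that only contains the three fields used by the jq filter above (`destRange`, `routeType`, `nextHopIp`). The function name and the idea of checking for redundancy across both tunnels are my own additions.

```python
import json

# Hand-built sample mirroring the four routes listed in this post
SAMPLE_STATUS = json.loads("""
{"result": {"bestRoutesForRouter": [
  {"destRange": "172.16.0.0/16",  "routeType": "BGP", "nextHopIp": "169.254.21.130"},
  {"destRange": "192.168.0.0/16", "routeType": "BGP", "nextHopIp": "169.254.21.130"},
  {"destRange": "172.16.0.0/16",  "routeType": "BGP", "nextHopIp": "169.254.22.130"},
  {"destRange": "192.168.0.0/16", "routeType": "BGP", "nextHopIp": "169.254.22.130"}
]}}
""")


def bgp_next_hops(status, prefix):
    """Return the sorted BGP next hops through which a prefix is learned."""
    routes = status.get("result", {}).get("bestRoutesForRouter", [])
    return sorted(
        route["nextHopIp"]
        for route in routes
        if route.get("destRange") == prefix and route.get("routeType") == "BGP"
    )


# The Azure VNet prefix should arrive over both tunnels
hops = bgp_next_hops(SAMPLE_STATUS, "192.168.0.0/16")
print(f"192.168.0.0/16 learned via {len(hops)} next hops: {hops}")
```

Note that the Cloud Router also learns the AWS prefix (172.16.0.0/16) through Azure, which the same function confirms when called with that prefix.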

The Google VPC prefix (10.0.1.0/24) will be learned by the VNGs too. You can either inspect the prefixes learned by the VNG with the az network vnet-gateway list-learned-routes command, or just check the effective routes of a VM in the VNet, as we did in the AWS example:

    ❯ az network nic show-effective-route-table -n testvmVMNic -g $rg -o table
    Source                 State    Address Prefix    Next Hop Type          Next Hop IP
    ---------------------  -------  ----------------  ---------------------  -------------
    Default                Active   192.168.0.0/16    VnetLocal
    VirtualNetworkGateway  Active   172.16.0.0/16     VirtualNetworkGateway  192.168.1.4
    VirtualNetworkGateway  Active   172.16.0.0/16     VirtualNetworkGateway  192.168.1.5
    VirtualNetworkGateway  Active   10.0.1.0/24       VirtualNetworkGateway  192.168.1.4
    VirtualNetworkGateway  Active   10.0.1.0/24       VirtualNetworkGateway  192.168.1.5
    Default                Active   0.0.0.0/0         Internet
    Default                Active   10.0.0.0/8        None
    Default                Active   100.64.0.0/10     None
    [...]

And as expected (by the way, I might or might not have forgotten to add the Google firewall rules to allow ICMP to the VPC on my first try), our Azure VM can now reach Google compute instances:

    jose@testvm:~$ ping 10.0.1.2
    PING 10.0.1.2 (10.0.1.2) 56(84) bytes of data.
    64 bytes from 10.0.1.2: icmp_seq=1 ttl=63 time=16.7 ms
    64 bytes from 10.0.1.2: icmp_seq=2 ttl=63 time=14.9 ms

Adding up

So we have seen that it is possible to add IPsec tunnels with dynamic routing between AWS, Azure and Google Cloud using the native constructs of each cloud. While these native cloud services are deeply integrated with the rest of the cloud’s ecosystem (APIs, monitoring, etc), you need to be aware of each cloud’s particularities when connecting them together, which adds some complexity. If you need a more consistent cross-cloud setup, you might rather look at deploying your favorite networking vendor’s appliances as virtual instances in your VNets/VPCs.

The choice is yours. Which one would you pick? Thanks for reading!

Hi Jose, thanks for this great interview. One question though, I see a lot of Azure customers using Virtual WAN, is there a specific reason you did not mention this in your overview?


Hey Mark, sure! Good question, and BTW, you could have asked as well about AWS TGW, AWS Cloud WAN or Google’s Network Connectivity Center.

In the case of Virtual WAN there is an easy explanation: Virtual WAN VPN gateways do not yet support multiple APIPAs, which is critical to connect to AWS (with GCP a single APIPA works just fine).


Instead of dealing with all this complexity, I would use point and click (or Terraform) solution from Aviatrix and will build Multi-Cloud connectivity in minutes. You can try here https://community.aviatrix.com/t/g9hx9jh


Hey netJoints, I am well aware of the Aviatrix solution, and you might have noticed that I even refer to it at the beginning of the blog post. By the way, your point is not only valid for Aviatrix, but for any network virtual appliance as well, SDWAN or not. For example, you could configure an IPsec+BGP overlay with Linux VMs too.

This post is not trying to say that the native approach is the best for everybody, but there are situations where it does make sense.

Claiming that any given technology or vendor is the best no matter what, is a recipe for failure, as experience has shown me countless times in my career.


Very awesome blog post, thanks for putting this together! I agree with your statement re Aviatrix – it’s not the solution for everything it’s just another solution which has its own pros and cons.


Happy you liked it!


Appreciate the article! Cloud to cloud connections are becoming a big part of what I’m starting to design so really helpful. Thx!


Happy these are helpful Adam!


Hi Jose,

It looks like I am trying the same, but with on-prem and an ExpressRoute private VPN instead of AWS or GCP. However, I am running into problems with the BGP next-hop IP from the Azure private IP. Have you also encountered such a problem?

For example, I have 2 BGP peers within the VPN tunnel private IPs:

onPrem-1: 169.254.21.1 <-> Azure 169.254.21.2

onPrem-2: 169.254.22.1 <-> Azure 169.254.22.2

I expect to get the prefixes from BGP peer 169.254.21.2 with BGP next hop 169.254.21.2. But this changes over time, or at least when doing some failover tests (cutting the on-prem connection/interface for ExpressRoute). Then I get prefixes from BGP peer 169.254.21.2 with BGP next hop 169.254.22.2, and the other way around for onPrem-2.


I like thinking about the BGP addresses of Azure VPN gateways as loopbacks, and the tunnels as unnumbered.

When connecting Azure VPN to AWS or Google you need to make Azure mimic their approach (IPs in the interfaces), but to connect to on prem you don’t need that, since you can configure your interfaces as unnumbered too.
